Editor’s be aware: This text, initially revealed on Nov. 15, 2023, has been up to date.

To grasp the newest developments in generative AI, think about a courtroom.

Judges hear and determine instances based mostly on their common understanding of the legislation. Typically a case — like a malpractice go well with or a labor dispute — requires particular experience, so judges ship courtroom clerks to a legislation library, on the lookout for precedents and particular instances they will cite.

Like an excellent decide, massive language fashions (LLMs) can reply to all kinds of human queries. However to ship authoritative solutions — grounded in particular courtroom proceedings or related ones — the mannequin must be offered that info.

The courtroom clerk of AI is a course of known as retrieval-augmented technology, or RAG for brief.

How It Received Named ‘RAG’

Patrick Lewis, lead writer of the 2020 paper that coined the time period, apologized for the unflattering acronym that now describes a rising household of strategies throughout a whole lot of papers and dozens of economic providers he believes characterize the way forward for generative AI.

“We undoubtedly would have put extra thought into the identify had we recognized our work would develop into so widespread,” Lewis stated in an interview from Singapore, the place he was sharing his concepts with a regional convention of database builders.

“We at all times deliberate to have a nicer sounding identify, however when it got here time to write down the paper, nobody had a greater concept,” stated Lewis, who now leads a RAG group at AI startup Cohere.

So, What Is Retrieval-Augmented Era (RAG)?

Retrieval-augmented technology is a way for enhancing the accuracy and reliability of generative AI fashions with info fetched from particular and related information sources.

In different phrases, it fills a niche in how LLMs work. Beneath the hood, LLMs are neural networks, sometimes measured by what number of parameters they comprise. An LLM’s parameters basically characterize the final patterns of how people use phrases to type sentences.

That deep understanding, typically known as parameterized data, makes LLMs helpful in responding to common prompts. Nonetheless, it doesn’t serve customers who need a deeper dive into a particular sort of data.

Combining Inside, Exterior Sources

Lewis and colleagues developed retrieval-augmented technology to hyperlink generative AI providers to exterior sources, particularly ones wealthy within the newest technical particulars.

The paper, with coauthors from the previous Fb AI Analysis (now Meta AI), College Faculty London and New York College, known as RAG “a general-purpose fine-tuning recipe” as a result of it may be utilized by practically any LLM to attach with virtually any exterior useful resource.

Constructing Person Belief

Retrieval-augmented technology provides fashions sources they will cite, like footnotes in a analysis paper, so customers can examine any claims. That builds belief.

What’s extra, the approach may help fashions clear up ambiguity in a consumer question. It additionally reduces the chance {that a} mannequin will give a really believable however incorrect reply, a phenomenon known as hallucination.

One other nice benefit of RAG is it’s comparatively simple. A weblog by Lewis and three of the paper’s coauthors stated builders can implement the method with as few as 5 traces of code.

That makes the strategy quicker and cheaper than retraining a mannequin with further datasets. And it lets customers hot-swap new sources on the fly.

How Folks Are Utilizing RAG

With retrieval-augmented technology, customers can basically have conversations with information repositories, opening up new sorts of experiences. This implies the purposes for RAG could possibly be a number of instances the variety of out there datasets.

For instance, a generative AI mannequin supplemented with a medical index could possibly be a fantastic assistant for a health care provider or nurse. Monetary analysts would profit from an assistant linked to market information.

In reality, nearly any enterprise can flip its technical or coverage manuals, movies or logs into sources known as data bases that may improve LLMs. These sources can allow use instances akin to buyer or area help, worker coaching and developer productiveness.

The broad potential is why firms together with AWS, IBM, Glean, Google, Microsoft, NVIDIA, Oracle and Pinecone are adopting RAG.

Getting Began With Retrieval-Augmented Era

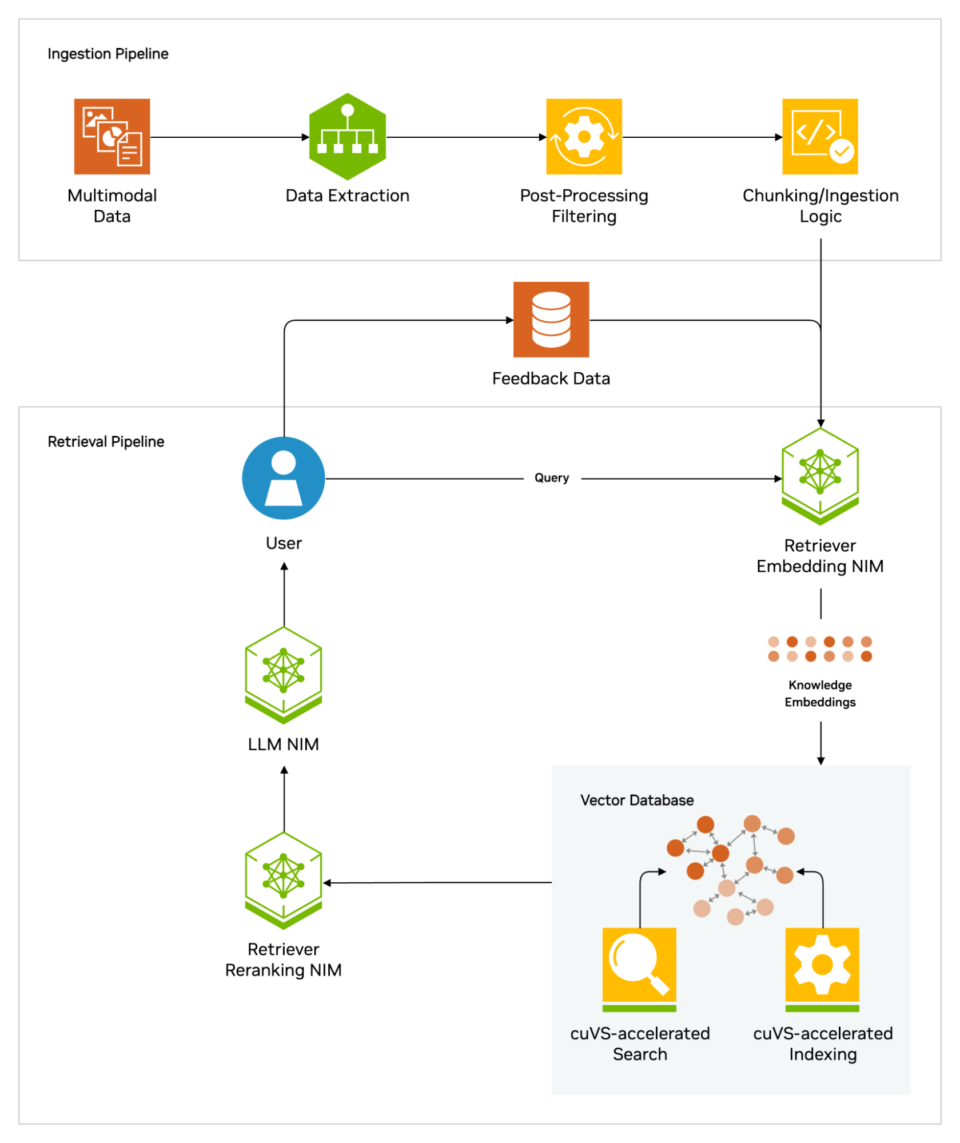

The NVIDIA AI Blueprint for RAG helps builders construct pipelines to attach their AI purposes to enterprise information utilizing industry-leading know-how. This reference structure gives builders with a basis for constructing scalable and customizable retrieval pipelines that ship excessive accuracy and throughput.

The blueprint can be utilized as is, or mixed with different NVIDIA Blueprints for superior use instances together with digital people and AI assistants. For instance, the blueprint for AI assistants empowers organizations to construct AI brokers that may rapidly scale their customer support operations with generative AI and RAG.

As well as, builders and IT groups can attempt the free, hands-on NVIDIA LaunchPad lab for constructing AI chatbots with RAG, enabling quick and correct responses from enterprise information.

All of those sources use NVIDIA NeMo Retriever, which gives main, large-scale retrieval accuracy and NVIDIA NIM microservices for simplifying safe, high-performance AI deployment throughout clouds, information facilities and workstations. These are supplied as a part of the NVIDIA AI Enterprise software program platform for accelerating AI growth and deployment.

Getting the most effective efficiency for RAG workflows requires huge quantities of reminiscence and compute to maneuver and course of information. The NVIDIA GH200 Grace Hopper Superchip, with its 288GB of quick HBM3e reminiscence and eight petaflops of compute, is good — it will probably ship a 150x speedup over utilizing a CPU.

As soon as firms get acquainted with RAG, they will mix a wide range of off-the-shelf or customized LLMs with inner or exterior data bases to create a variety of assistants that assist their workers and clients.

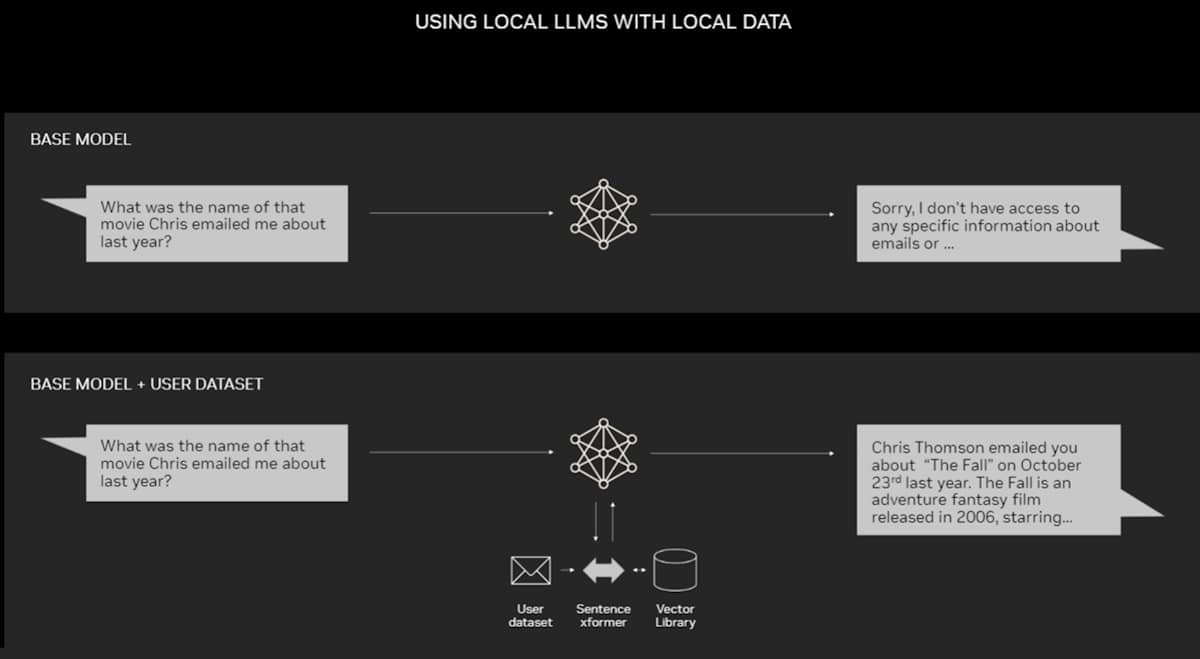

RAG doesn’t require an information heart. LLMs are debuting on Home windows PCs, because of NVIDIA software program that permits all kinds of purposes customers can entry even on their laptops.

PCs geared up with NVIDIA RTX GPUs can now run some AI fashions domestically. Through the use of RAG on a PC, customers can hyperlink to a non-public data supply – whether or not that be emails, notes or articles – to enhance responses. The consumer can then really feel assured that their information supply, prompts and response all stay personal and safe.

A current weblog gives an instance of RAG accelerated by TensorRT-LLM for Home windows to get higher outcomes quick.

The Historical past of RAG

The roots of the approach return at the very least to the early Seventies. That’s when researchers in info retrieval prototyped what they known as question-answering programs, apps that use pure language processing (NLP) to entry textual content, initially in slim matters akin to baseball.

The ideas behind this sort of textual content mining have remained pretty fixed over time. However the machine studying engines driving them have grown considerably, rising their usefulness and recognition.

Within the mid-Nineteen Nineties, the Ask Jeeves service, now Ask.com, popularized query answering with its mascot of a well-dressed valet. IBM’s Watson turned a TV superstar in 2011 when it handily beat two human champions on the Jeopardy! sport present.

Right this moment, LLMs are taking question-answering programs to a complete new stage.

Insights From a London Lab

The seminal 2020 paper arrived as Lewis was pursuing a doctorate in NLP at College Faculty London and dealing for Meta at a brand new London AI lab. The group was trying to find methods to pack extra data into an LLM’s parameters and utilizing a benchmark it developed to measure its progress.

Constructing on earlier strategies and impressed by a paper from Google researchers, the group “had this compelling imaginative and prescient of a educated system that had a retrieval index in the course of it, so it may be taught and generate any textual content output you needed,” Lewis recalled.

When Lewis plugged into the work in progress a promising retrieval system from one other Meta group, the primary outcomes have been unexpectedly spectacular.

“I confirmed my supervisor and he stated, ‘Whoa, take the win. This kind of factor doesn’t occur fairly often,’ as a result of these workflows could be exhausting to arrange appropriately the primary time,” he stated.

Lewis additionally credit main contributions from group members Ethan Perez and Douwe Kiela, then of New York College and Fb AI Analysis, respectively.

When full, the work, which ran on a cluster of NVIDIA GPUs, confirmed tips on how to make generative AI fashions extra authoritative and reliable. It’s since been cited by a whole lot of papers that amplified and prolonged the ideas in what continues to be an lively space of analysis.

How Retrieval-Augmented Era Works

At a excessive stage, right here’s how retrieval-augmented technology works.

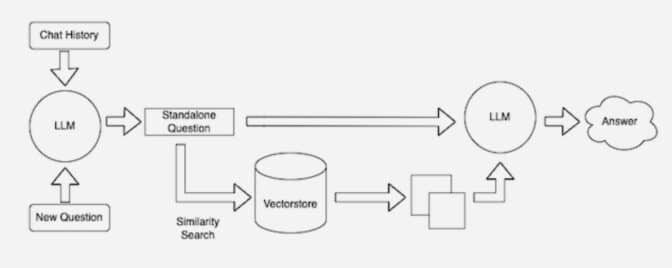

When customers ask an LLM a query, the AI mannequin sends the question to a different mannequin that converts it right into a numeric format so machines can learn it. The numeric model of the question is usually known as an embedding or a vector.

The embedding mannequin then compares these numeric values to vectors in a machine-readable index of an out there data base. When it finds a match or a number of matches, it retrieves the associated information, converts it to human-readable phrases and passes it again to the LLM.

Lastly, the LLM combines the retrieved phrases and its personal response to the question right into a remaining reply it presents to the consumer, doubtlessly citing sources the embedding mannequin discovered.

Retaining Sources Present

Within the background, the embedding mannequin constantly creates and updates machine-readable indices, typically known as vector databases, for brand spanking new and up to date data bases as they develop into out there.

Many builders discover LangChain, an open-source library, could be significantly helpful in chaining collectively LLMs, embedding fashions and data bases. NVIDIA makes use of LangChain in its reference structure for retrieval-augmented technology.

The LangChain neighborhood gives its personal description of a RAG course of.

The way forward for generative AI lies in agentic AI — the place LLMs and data bases are dynamically orchestrated to create autonomous assistants. These AI-driven brokers can improve decision-making, adapt to complicated duties and ship authoritative, verifiable outcomes for customers.