In many parts of the world, including major technology hubs in the U.S., there’s a yearslong wait for AI factories to come online, pending the buildout of new energy infrastructure to power them.

Emerald AI, a startup based in Washington, D.C., is developing an AI solution that could enable the next generation of data centers to come online sooner by tapping existing energy resources in a more flexible and strategic way.

“Traditionally, the power grid has treated data centers as inflexible: energy system operators assume that a 500-megawatt AI factory will always require access to that full amount of power,” said Varun Sivaram, founder and CEO of Emerald AI. “But in moments of need, when demands on the grid peak and supply is short, the workloads that drive AI factory energy use can now be flexible.”

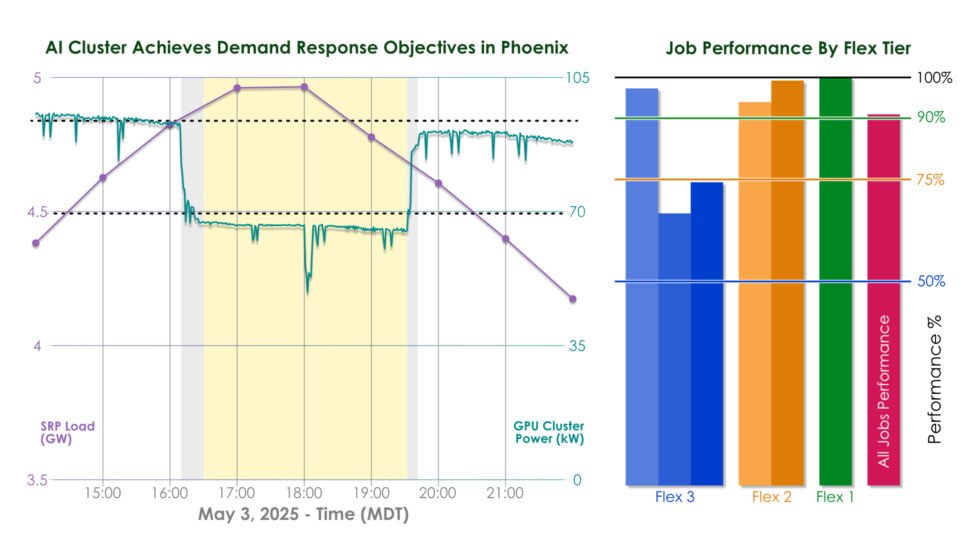

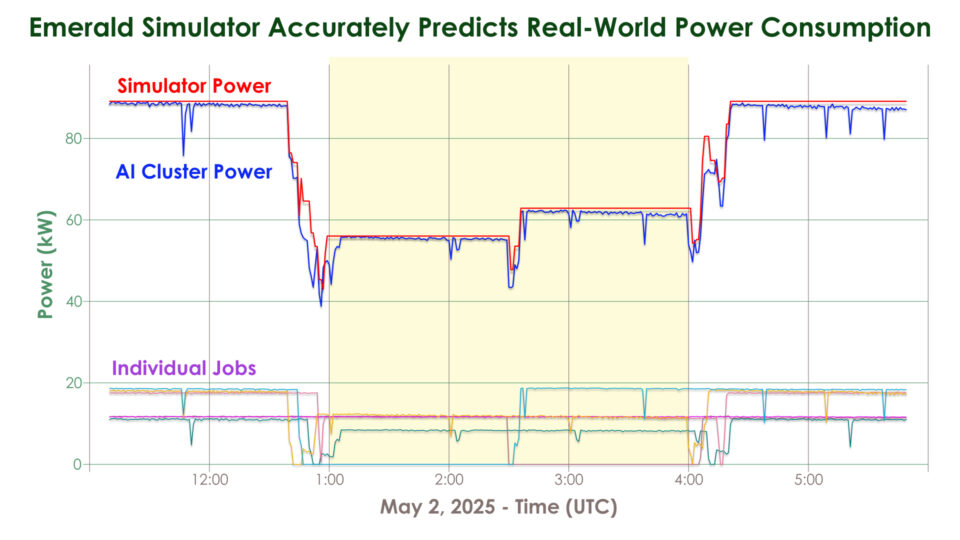

That flexibility is enabled by the startup’s Emerald Conductor platform, an AI-powered system that acts as a smart mediator between the grid and a data center. In a recent field test in Phoenix, Arizona, the company and its partners demonstrated that its software can reduce the power consumption of AI workloads running on a cluster of 256 NVIDIA GPUs by 25% over three hours during a grid stress event while preserving compute service quality.

Emerald AI achieved this by orchestrating the host of different workloads that AI factories run. Some jobs can be paused or slowed, like the training or fine-tuning of a large language model for academic research. Others, like inference queries for an AI service used by thousands or even millions of people, can’t be rescheduled, but could be redirected to another data center where the local power grid is less stressed.

Emerald Conductor coordinates these AI workloads across a network of data centers to meet power grid demands, ensuring full performance of time-sensitive workloads while dynamically reducing the throughput of flexible workloads within acceptable limits.
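The idea of protecting time-sensitive jobs while throttling flexible ones can be illustrated with a small sketch. This is not Emerald Conductor's actual algorithm, which the article does not describe. The `Job` class, the `min_fraction` field and the `plan_curtailment` function are hypothetical names, and the sketch assumes that a job's power draw scales linearly with its throughput.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float      # current power draw of the job (illustrative numbers)
    min_fraction: float  # lowest acceptable throughput: 1.0 = never slow, 0.0 = may pause


def plan_curtailment(jobs, target_reduction):
    """Return {job name: throughput fraction} that meets a cluster-wide power cut.

    Greedy sketch: cut the most flexible jobs first and never push a job
    below its own min_fraction. Assumes power scales linearly with throughput.
    """
    total_kw = sum(job.power_kw for job in jobs)
    needed_kw = total_kw * target_reduction
    plan = {job.name: 1.0 for job in jobs}

    for job in sorted(jobs, key=lambda j: j.min_fraction):
        if needed_kw <= 1e-9:
            break
        available_kw = job.power_kw * (1.0 - job.min_fraction)
        cut_kw = min(available_kw, needed_kw)
        if job.power_kw > 0:
            plan[job.name] = 1.0 - cut_kw / job.power_kw
        needed_kw -= cut_kw

    if needed_kw > 1e-9:
        raise ValueError("target cannot be met within the stated flexibility limits")
    return plan


jobs = [
    Job("inference", power_kw=400, min_fraction=1.0),       # time-sensitive
    Job("fine_tune", power_kw=300, min_fraction=0.5),       # may be slowed
    Job("batch_training", power_kw=300, min_fraction=0.0),  # may be paused
]
plan = plan_curtailment(jobs, target_reduction=0.25)
```

In this example the whole 25% cut lands on the batch training job, while inference and fine-tuning keep running at full speed. A production scheduler would also weigh deadlines, fairness and the option of redirecting work to another site.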

Beyond helping AI factories come online using existing power systems, this ability to modulate power usage could help cities avoid rolling blackouts, protect communities from rising utility rates and make it easier for the grid to integrate clean energy.

“Renewable energy, which is intermittent and variable, is easier to add to a grid if that grid has lots of shock absorbers that can shift with changes in power supply,” said Ayse Coskun, Emerald AI’s chief scientist and a professor at Boston University. “Data centers can become some of those shock absorbers.”

A member of the NVIDIA Inception program for startups and an NVentures portfolio company, Emerald AI today announced more than $24 million in seed funding. Its Phoenix demonstration, part of EPRI’s DCFlex data center flexibility initiative, was executed in collaboration with NVIDIA, Oracle Cloud Infrastructure (OCI) and the regional power utility Salt River Project (SRP).

“The Phoenix technology trial validates the vast potential of an essential element in data center flexibility,” said Anuja Ratnayake, who leads EPRI’s DCFlex Consortium.

EPRI is also leading the Open Power AI Consortium, a group of energy companies, researchers and technology companies, including NVIDIA, working on AI applications for the energy sector.

Using the Grid to Its Full Potential

Electric grid capacity is typically underused except during peak events like hot summer days or cold winter storms, when there’s a high power demand for cooling and heating. That means, in many cases, there’s room on the existing grid for new data centers, as long as they can temporarily dial down energy usage during periods of peak demand.

A recent Duke University study estimates that if new AI data centers could flex their electricity consumption by just 25% for two hours at a time, less than 200 hours a year, they could unlock 100 gigawatts of new capacity to connect data centers, equivalent to over $2 trillion in data center investment.
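To put those figures in perspective, a quick back-of-the-envelope calculation shows how small the curtailed share is. The 25% and 200-hour values come from the study figures quoted above. The flat, constant baseline load is a simplifying assumption made only for this sketch.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year

flex_fraction = 0.25   # share of load shed during a flex event
max_flex_hours = 200   # upper bound on flex hours per year

# Share of the year spent in a flex event.
time_share = max_flex_hours / HOURS_PER_YEAR

# Share of annual energy given up, assuming a flat baseline load.
energy_share = flex_fraction * time_share

print(f"Time in flex events: {time_share:.2%}")      # 2.28%
print(f"Annual energy forgone: {energy_share:.2%}")  # 0.57%
```

Under that assumption, a data center gives up well under 1% of its annual energy use in exchange for the ability to connect to the existing grid.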

Putting AI Factory Flexibility to the Test

Emerald AI’s recent trial was conducted in the Oracle Cloud Phoenix Region on NVIDIA GPUs spread across a multi-rack cluster managed by Databricks MosaicML.

“Rapid delivery of high-performance compute to AI customers is critical but is constrained by grid power availability,” said Pradeep Vincent, chief technical architect and senior vice president of Oracle Cloud Infrastructure, which supplied cluster power telemetry for the trial. “Compute infrastructure that is responsive to real-time grid conditions while meeting the performance demands unlocks a new model for scaling AI: faster, greener and more grid-aware.”

Jonathan Frankle, chief AI scientist at Databricks, guided the trial’s selection of AI workloads and their flexibility thresholds.

“There’s a certain level of latent flexibility in how AI workloads are typically run,” Frankle said. “Often, a small percentage of jobs are truly non-preemptible, whereas many jobs such as training, batch inference or fine-tuning have different priority levels depending on the user.”

Because Arizona is among the top states for data center growth, SRP set challenging flexibility targets for the AI compute cluster, a 25% power consumption reduction compared with baseline load, in an effort to demonstrate how new data centers can provide meaningful relief to Phoenix’s power grid constraints.

“This test was an opportunity to completely reimagine AI data centers as helpful resources to help us operate the power grid more effectively and reliably,” said David Rousseau, president of SRP.

On May 3, a hot day in Phoenix with high air-conditioning demand, SRP’s system experienced peak demand at 6 p.m. During the test, the data center cluster reduced consumption gradually with a 15-minute ramp down, maintained the 25% power reduction over three hours, then ramped back up without exceeding its original baseline consumption.
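The shape of that power profile can be sketched as a simple function of time. The 15-minute ramp down, the 25% reduction and the three-hour hold come from the trial description. The linear ramps, the 15-minute ramp-up duration and the name `power_cap_fraction` are assumptions made for illustration, since the article does not give those details.

```python
def power_cap_fraction(minutes_since_start, reduction=0.25,
                       ramp_down_min=15.0, hold_min=180.0, ramp_up_min=15.0):
    """Return the allowed power as a fraction of baseline at a given time.

    Linear ramp down, flat hold, linear ramp up. The ramp-up length and
    the linear shape are assumptions, not figures from the trial.
    """
    t = minutes_since_start
    floor = 1.0 - reduction
    hold_end = ramp_down_min + hold_min
    event_end = hold_end + ramp_up_min

    if t <= 0 or t >= event_end:
        return 1.0  # outside the event: run at baseline, never above it
    if t < ramp_down_min:
        return 1.0 - reduction * (t / ramp_down_min)
    if t <= hold_end:
        return floor
    return floor + reduction * ((t - hold_end) / ramp_up_min)


# Sample the cap every 30 minutes across the event.
profile = [(m, power_cap_fraction(m)) for m in range(0, 211, 30)]
```

Because the function never returns a value above 1.0, the cluster comes back to baseline without the rebound spike that can follow a curtailment.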

AI factory users can label their workloads to guide Emerald’s software on which jobs can be slowed, paused or rescheduled. Alternatively, Emerald’s AI agents can make these predictions automatically.

Orchestration decisions were guided by the Emerald Simulator, which accurately models system behavior to optimize trade-offs between energy usage and AI performance. Historical grid demand from data provider Amperon confirmed that the AI cluster performed correctly during the grid’s peak period.

Forging an Energy-Resilient Future

The International Energy Agency projects that electricity demand from data centers globally could more than double by 2030. In light of the anticipated demand on the grid, the state of Texas passed a law that requires data centers to ramp down consumption or disconnect from the grid at utilities’ requests during load shed events.

“In such situations, if data centers are able to dynamically reduce their energy consumption, they might be able to avoid getting kicked off the power supply entirely,” Sivaram said.

Looking ahead, Emerald AI is expanding its technology trials in Arizona and beyond, and it plans to continue working with NVIDIA to test its technology on AI factories.

“We can make data centers controllable while assuring acceptable AI performance,” Sivaram said. “AI factories can flex when the grid is tight, and sprint when users need them to.”

Learn more about NVIDIA Inception and explore AI platforms designed for power and utilities.