NVIDIA and Google Cloud are expanding access to accelerated computing to transform the full spectrum of enterprise workloads, from visual computing to agentic and physical AI.

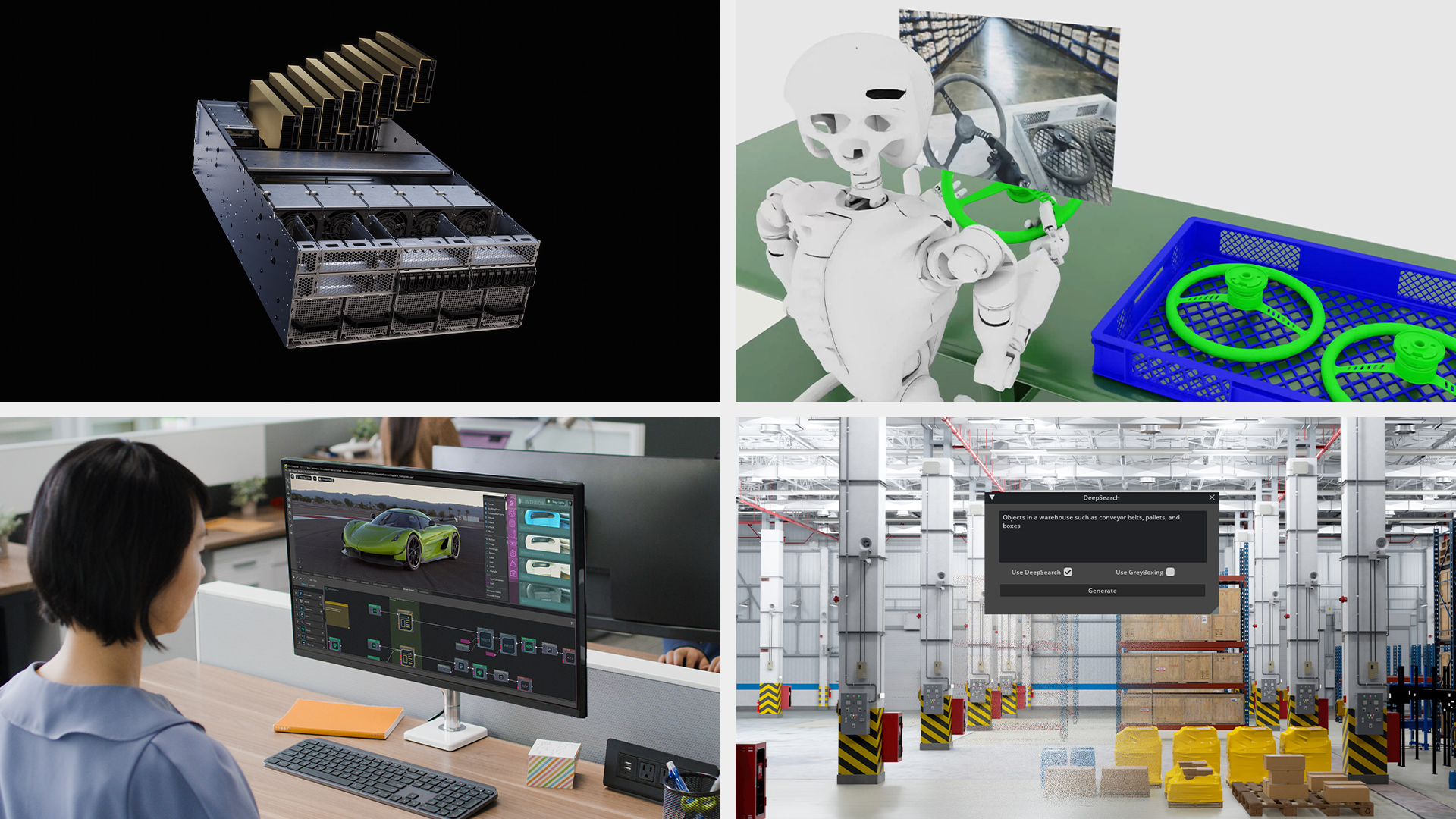

Google Cloud today announced the general availability of G4 VMs, powered by NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs. Plus, NVIDIA Omniverse and NVIDIA Isaac Sim are now available as virtual machine images (VMIs) on the Google Cloud Marketplace to unlock physical AI-driven applications for key industries like manufacturing, automotive and logistics.

This powerful combination creates a versatile, multi-workload platform for enterprises to accelerate their most demanding challenges on Google Cloud.

NVIDIA RTX PRO 6000 Blackwell GPUs excel at high-performance AI inference for multimodal, generative and agentic AI deployments, while also powering complex visual and simulation workloads ranging from computer-aided engineering and content creation to robotics simulation.

Customers like WPP are using G4 VMs with NVIDIA Omniverse to instantly generate photorealistic 3D advertising environments at global scale, while Altair is using the platform within Altair One to accelerate demanding simulation and fluid dynamics workloads.

The G4 VM: A Universal Platform for AI and Visual Computing

At the core of the new G4 VM is the NVIDIA RTX PRO 6000 Blackwell Server Edition GPU, the ultimate data center GPU for AI and visual computing.

Built on the NVIDIA Blackwell architecture, it serves as a universal platform for a broad range of workloads. Its design uniquely combines two powerful engines:

- Fifth-Generation Tensor Cores that deliver a massive leap in AI performance, supporting new data formats like FP4 to enable faster performance with lower memory usage.

- Fourth-Generation RT Cores that provide over 2x the real-time ray-tracing performance of the previous generation, enabling cinematic-quality graphics and photorealistic simulations.

On Google Cloud, these G4 VMs are built for massive scale, configurable with up to eight RTX PRO 6000 GPUs, totaling 768 GB of GDDR7 memory, and supported by high-throughput local and network storage.
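The memory figures above lend themselves to a quick back-of-the-envelope check. The minimal sketch below derives the per-GPU share from the article's totals (assuming memory is split evenly across the eight GPUs) and estimates how much space model weights would take at different precisions. The function names and the 70-billion-parameter model are hypothetical and chosen only for illustration. The estimate counts raw weight storage only and ignores quantization scale factors, activations and any runtime overhead.

```python
# Figures taken from the article: up to 8 GPUs per G4 VM, 768 GB of GDDR7 in total.
MAX_GPUS = 8
TOTAL_MEMORY_GB = 768

# Bits used to store one value in each numeric format.
BITS_PER_VALUE = {"FP16": 16, "FP8": 8, "FP4": 4}


def memory_per_gpu_gb(total_gb: float = TOTAL_MEMORY_GB, gpus: int = MAX_GPUS) -> float:
    """Per-GPU memory, assuming the total is split evenly across GPUs."""
    return total_gb / gpus


def weight_footprint_gb(num_params: float, fmt: str) -> float:
    """Approximate raw weight storage in GB (1 GB = 10**9 bytes).

    Ignores scale factors, activations, caches and other runtime overhead.
    """
    return num_params * BITS_PER_VALUE[fmt] / 8 / 1e9


if __name__ == "__main__":
    print(f"Memory per GPU: {memory_per_gpu_gb():.0f} GB")

    hypothetical_params = 70e9  # illustrative model size, not from the article
    for fmt in BITS_PER_VALUE:
        size = weight_footprint_gb(hypothetical_params, fmt)
        print(f"{fmt}: ~{size:.0f} GB of weights")
```

Under these assumptions, halving the bits per value halves the weight footprint, which is the sense in which a format like FP4 lowers memory usage relative to FP8 or FP16.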

As part of Google Cloud’s AI Hypercomputer architecture, G4 VMs natively integrate with services like Google Kubernetes Engine and Vertex AI to simplify containerized deployments and streamline machine learning operations for AI workloads. This flexibility extends to accelerating large-scale data analytics on Apache Spark and Hadoop with Dataproc.

For design and simulation, the VMs also support a broad ecosystem of popular third-party engineering and graphics applications like Autodesk AutoCAD, Blender and Dassault SolidWorks.

Industrial Digitalization at Scale With NVIDIA Omniverse

Google Cloud customers can now tap into NVIDIA Omniverse, a collection of integration-ready libraries and frameworks for developing industrial digitalization applications built on Universal Scene Description (OpenUSD).

With the availability of the Omniverse VMI and G4 VMs on Google Cloud, enterprises can:

- Build and Operate Digital Twins: Easily create physically accurate, real-time virtual replicas of factories and products to simulate and optimize operations. These workflows are powered by the NVIDIA Cosmos world foundation model platform and NVIDIA Omniverse Blueprints for creating digital twins.

- Accelerate Robotics Development: Use NVIDIA Isaac Sim, a reference application built on Omniverse, to train, simulate and validate AI-driven robots in physics-based virtual environments before deployment.

NVIDIA AI Accelerates Every Enterprise Workload

In addition to Omniverse, Google Cloud customers can use the full NVIDIA software stack to accelerate a range of high-demand workloads, including:

- Agentic AI: Developers can use the NVIDIA Nemotron family of open reasoning models and NVIDIA Blueprints to get started quickly with building sophisticated AI agents that can reason and act. For deployment, NVIDIA NIM, a set of easy-to-use microservices, provides optimized, high-performance inference for AI models with enterprise-grade security and support.

- Scientific and High-Performance Computing: Solve complex problems in fields like drug discovery and genomics using NVIDIA CUDA-X libraries and microservices. On the RTX PRO 6000 Blackwell GPU, core genomics algorithms used in sequence alignment can see up to 6.8x faster throughput compared with the previous generation.

- Design and Visual Computing: Power remote creative and design pipelines with NVIDIA RTX Virtual Workstation software, which delivers high-performance virtual workstation instances from G4 VMs to any device, anywhere.

These latest announcements establish a complete, end-to-end platform built on NVIDIA Blackwell, from NVIDIA GB200 NVL72 (A4X VMs) and NVIDIA HGX B200 (A4 VMs) for massive-scale AI training and inference, to the RTX PRO 6000 Blackwell for AI inference and visual computing on G4 VMs.

This unified architecture provides a seamless experience for accelerating every workload, empowering enterprises to tackle complex, multistage pipelines from data analytics to physical AI within a single, consistent cloud ecosystem.

Learn more about G4 VMs on Google Cloud, and deploy the NVIDIA Omniverse and Isaac Sim VMIs from the Google Cloud Marketplace. Explore NVIDIA Nemotron models and NVIDIA Blueprints