NVIDIA Blackwell’s scale-up capabilities set the stage to scale out the world’s largest AI factories.

The NVIDIA Blackwell structure is the reigning chief of the AI revolution.

Many consider Blackwell as a chip, however it could be higher to consider it as a platform powering large-scale AI infrastructure.

Surging Demand and Mannequin Complexity

Blackwell is the core of a complete system structure designed particularly to energy AI factories that produce intelligence utilizing the biggest and most complicated AI fashions.

In the present day’s frontier AI fashions have tons of of billions of parameters and are estimated to serve almost a billion customers per week. The following era of fashions are anticipated to have effectively over a trillion parameters — and are being educated on tens of trillions of tokens of knowledge drawn from textual content, picture and video datasets.

Scaling out an information middle — harnessing as much as 1000’s of computer systems to share the work — is critical to satisfy this demand. However far larger efficiency and power effectivity can come from first scaling up: by making an even bigger laptop.

Blackwell redefines the boundaries of simply how massive we will go.

Exponential development of parameters in notable AI fashions over time.

Knowledge Supply: Epoch (2025), with main processing by Our World In Knowledge

In the present day’s Most Difficult Type of Computing

AI factories are the machines of the following industrial revolution. Their work is AI inference — probably the most difficult type of computing identified in the present day — and their product is intelligence.

These factories require infrastructure that may adapt, scale out and maximize each little bit of compute useful resource out there.

What does that seem like?

A symphony of compute, networking, storage, energy and cooling — with integration on the silicon and techniques ranges, up and down racks — orchestrated by software program that sees tens of 1000’s of Blackwell GPUs as one.

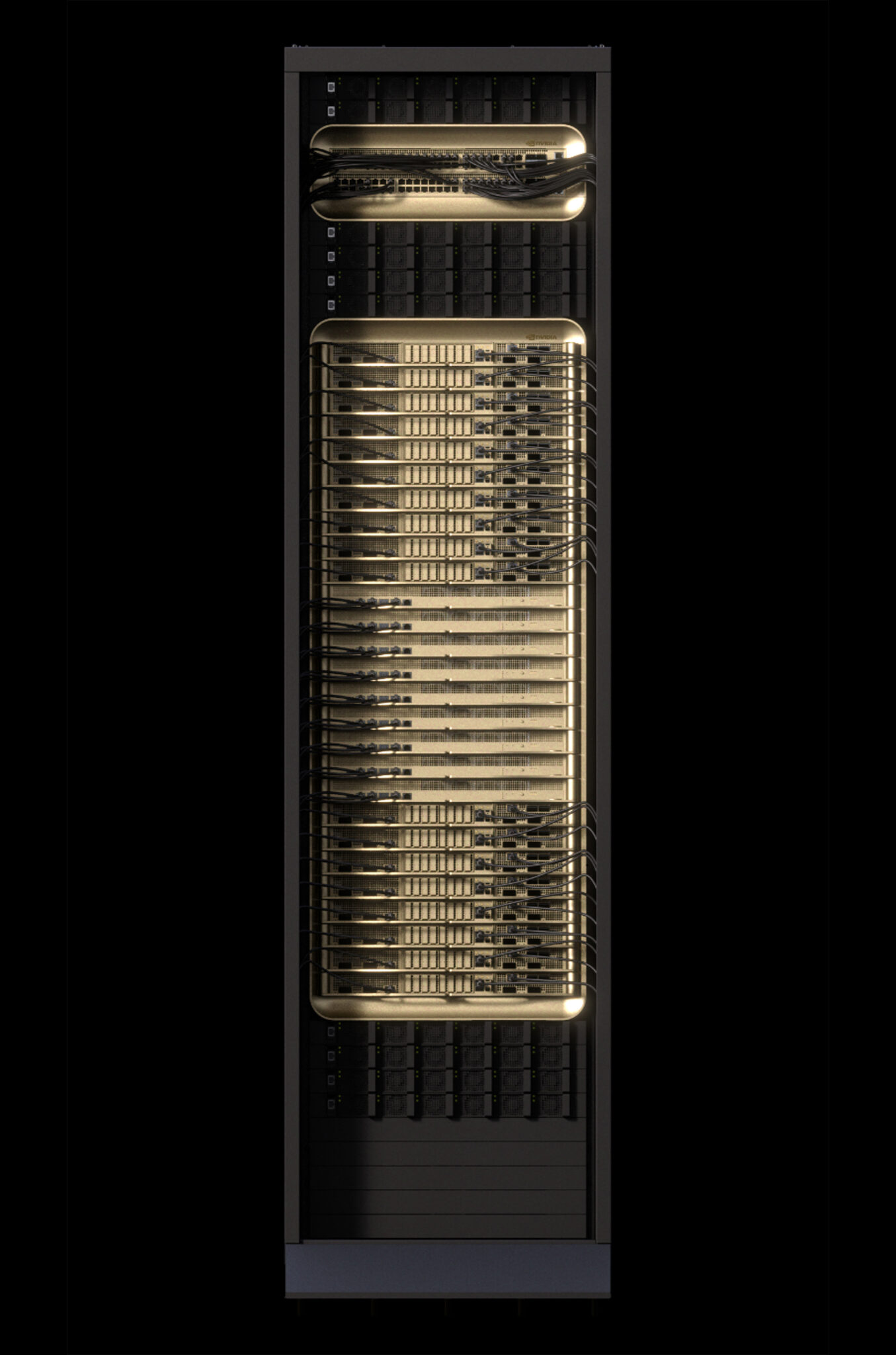

The brand new unit of the information middle is NVIDIA GB200 NVL72, a rack-scale system that acts as a single, large GPU.

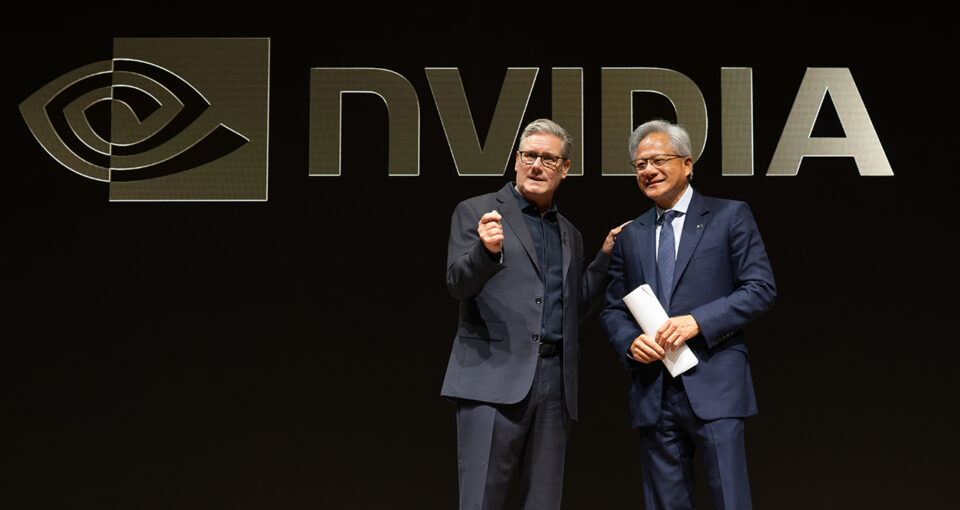

NVIDIA CEO Jensen Huang reveals off the NVIDIA GB200 NVL72 system and the NVIDIA Grace Blackwell superchip throughout his keynote at CES 2025.

Start of a Superchip

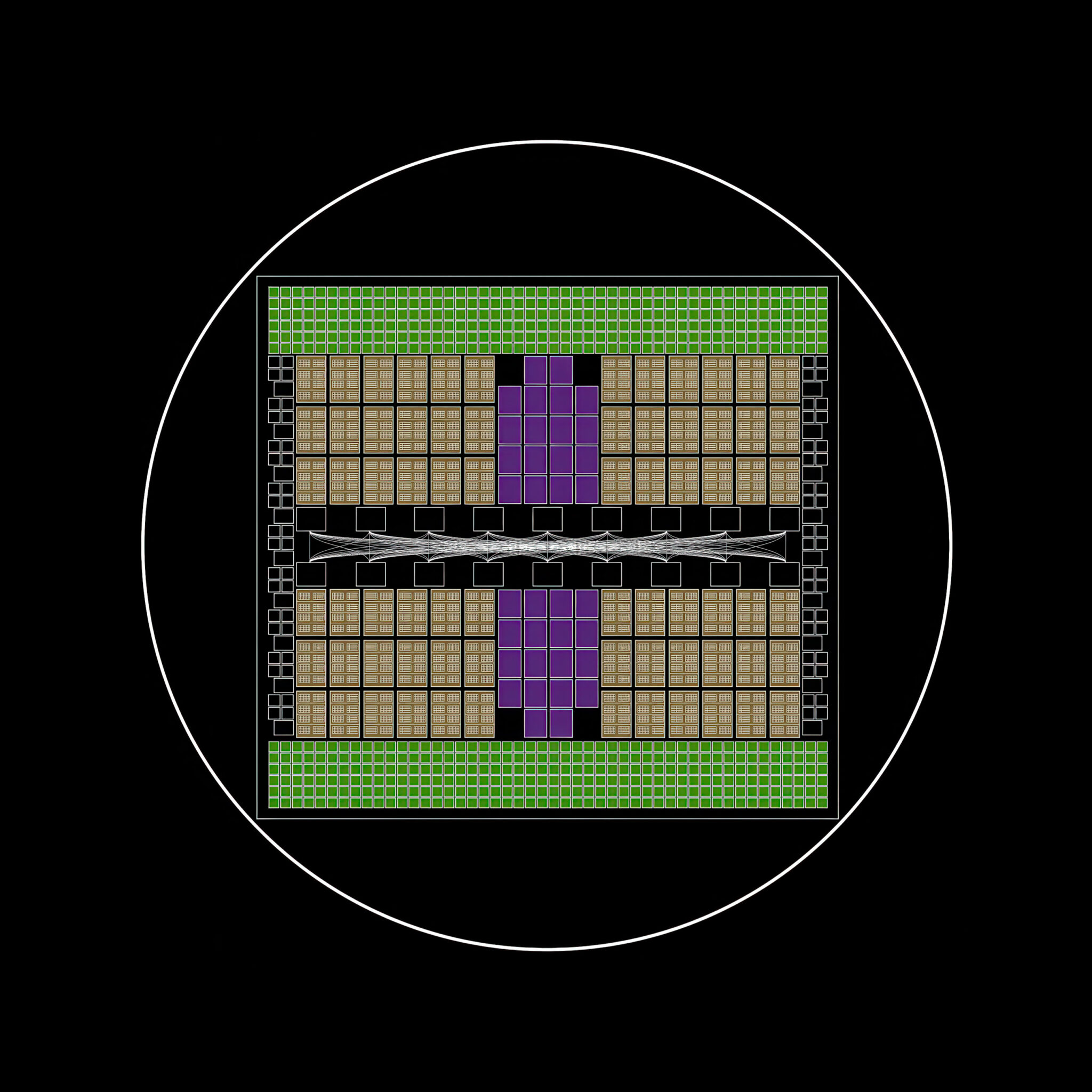

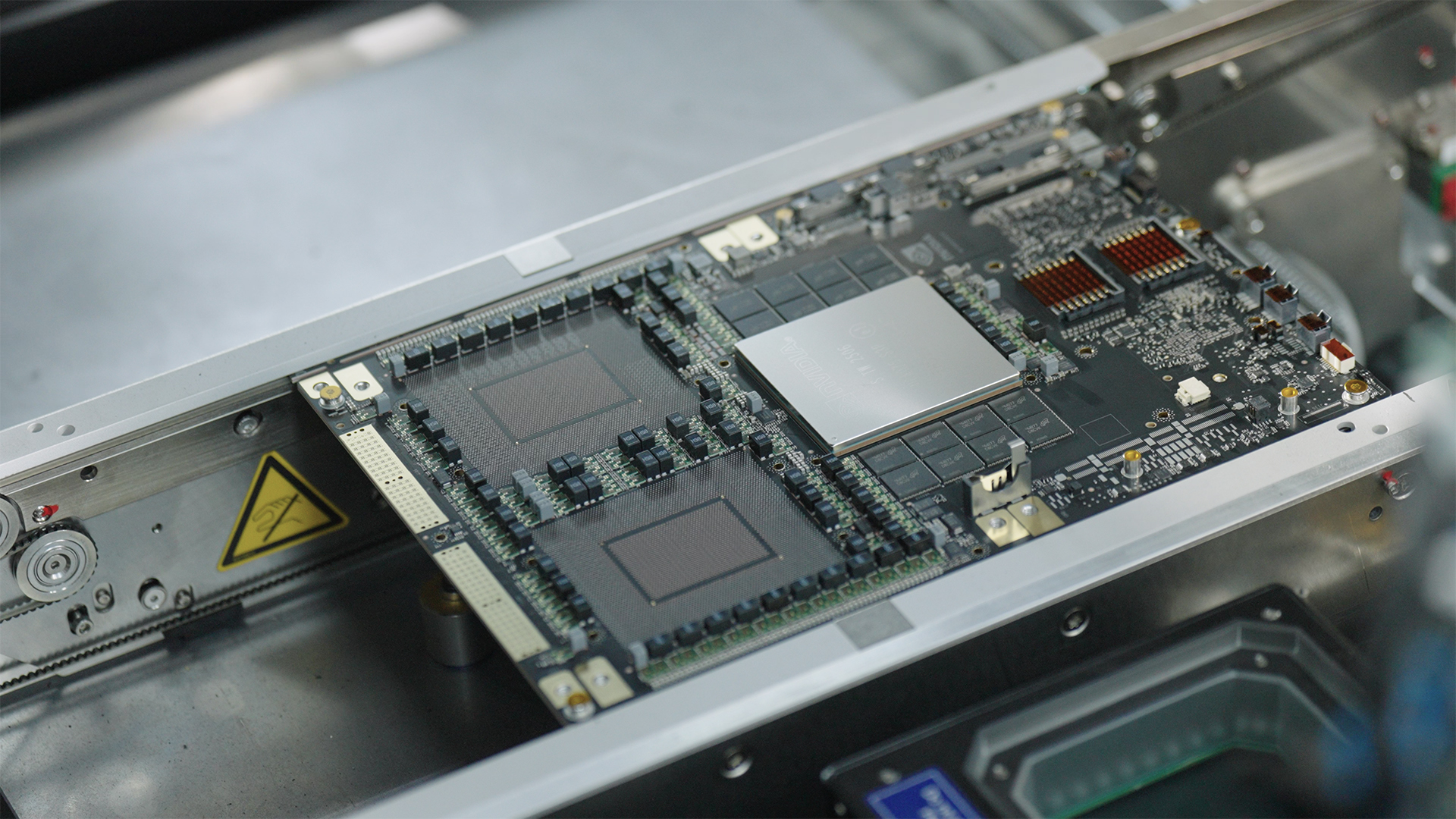

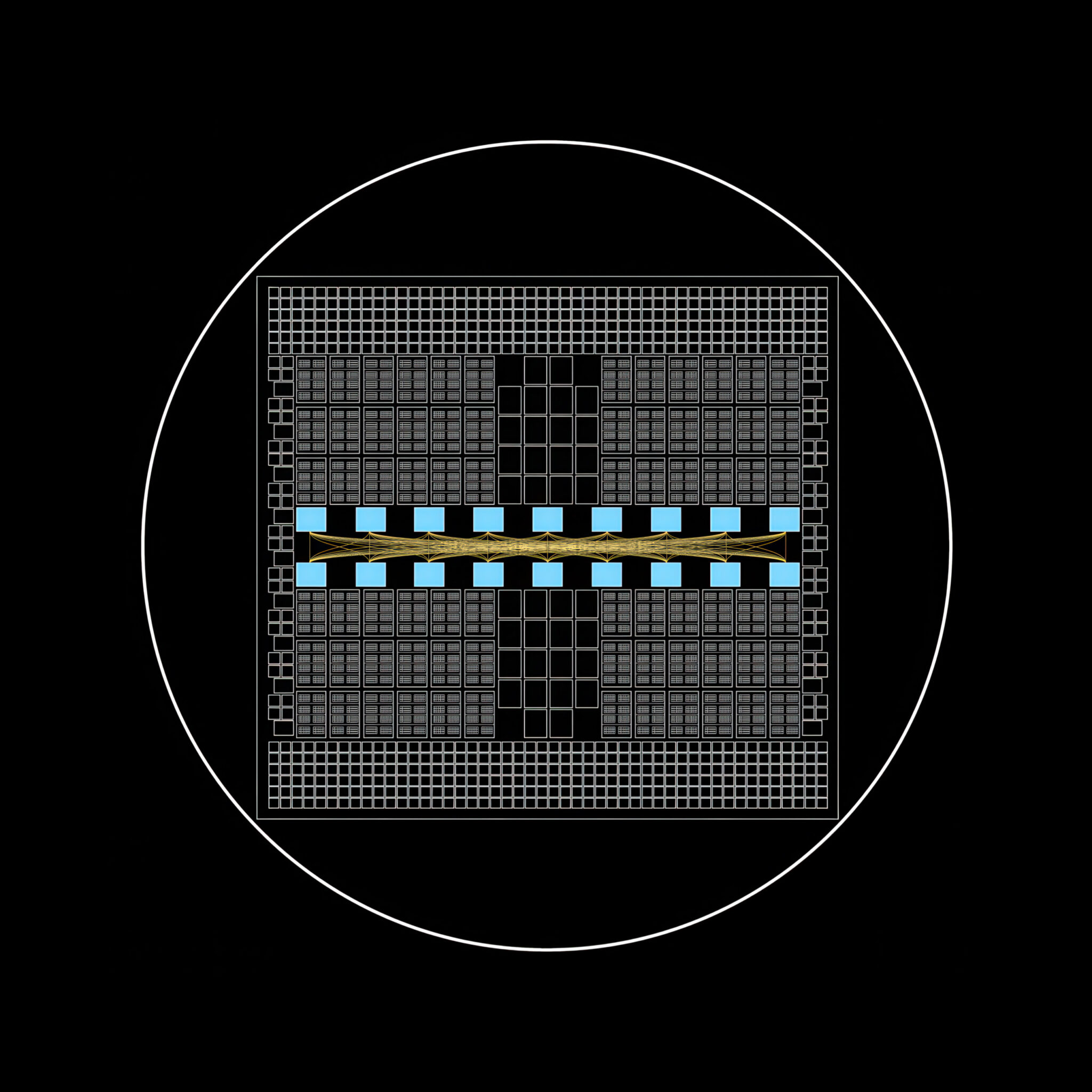

On the core, the NVIDIA Grace Blackwell superchip unites two Blackwell GPUs with one NVIDIA Grace CPU.

Fusing them right into a unified compute module — a superchip — boosts efficiency by an order of magnitude. To take action requires a brand new high-speed interconnect know-how launched with the NVIDIA Hopper structure: NVIDIA NVLink chip-to-chip.

This know-how unlocks seamless communication between the CPU and GPUs, enabling them to share reminiscence instantly, leading to decrease latency and better throughput for AI workloads.

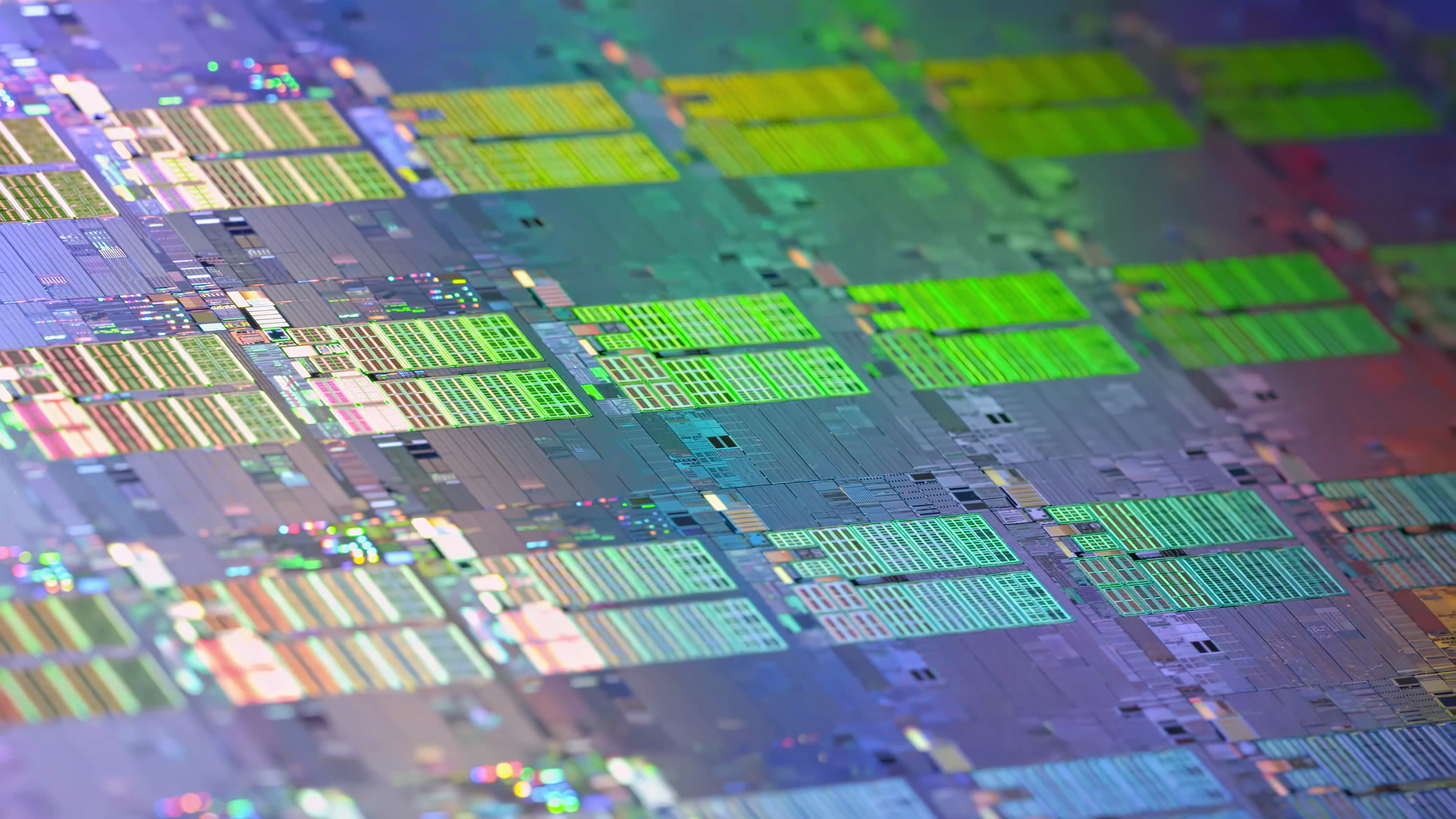

It takes a symphony of creation, reducing, meeting and inspection to construct a superchip.

A New Interconnect for the Superchip Period

Scaling this efficiency throughout a number of superchips with out bottlenecks was inconceivable with earlier networking know-how. So NVIDIA created a brand new sort of interconnect to maintain efficiency bottlenecks from rising and allow AI at scale.

A Spine That Clears Bottlenecks

The NVIDIA NVLink Swap backbone anchors GB200 NVL72 with a exactly engineered net of over 5,000 high-performance copper cables, connecting 72 GPUs throughout 18 compute trays to maneuver information at a staggering 130 TB/s.

That’s quick sufficient to switch the complete web’s peak site visitors in lower than a second.

Two miles of copper wire is exactly minimize, measured, assembled and examined to create the blisteringly quick NVIDIA NVLink Swap backbone.

The backbone cartridge is inspected earlier than set up.

The backbone, powered up, can transfer a complete web’s price of knowledge in lower than a second.

Constructing One Big GPU for Inference

The combination of all this superior {hardware} and software program, compute and networking allows GB200 NVL72 techniques to unlock new prospects for AI at scale.

Every rack weighs one-and-a-half tons — that includes greater than 600,000 components, two miles of wire and tens of millions of strains of code converged.

It acts as one big digital GPU, making factory-scale AI inference potential, the place each nanosecond and watt issues.

GB200 NVL72 In every single place

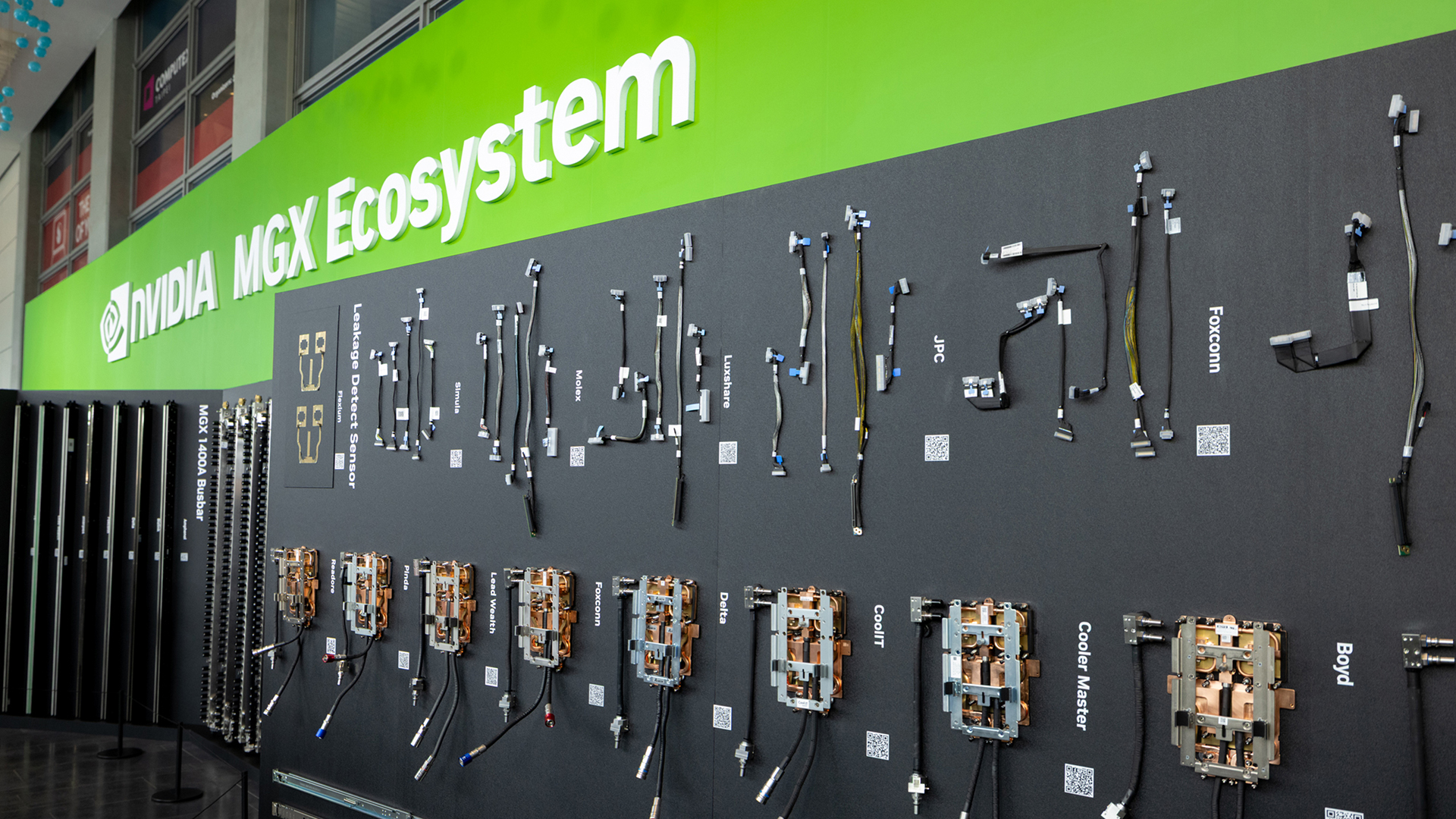

NVIDIA then deconstructed GB200 NVL72 in order that companions and clients can configure and construct their very own NVL72 techniques.

Every NVL72 system is a two-ton, 1.2-million-part supercomputer. NVL72 techniques are manufactured throughout greater than 150 factories worldwide with 200 know-how companions.

From cloud giants to system builders, companions worldwide are producing NVIDIA Blackwell NVL72 techniques.

Time to Scale Out

Tens of 1000’s of Blackwell NVL72 techniques converge to create AI factories.

Working collectively isn’t sufficient. They need to work as one.

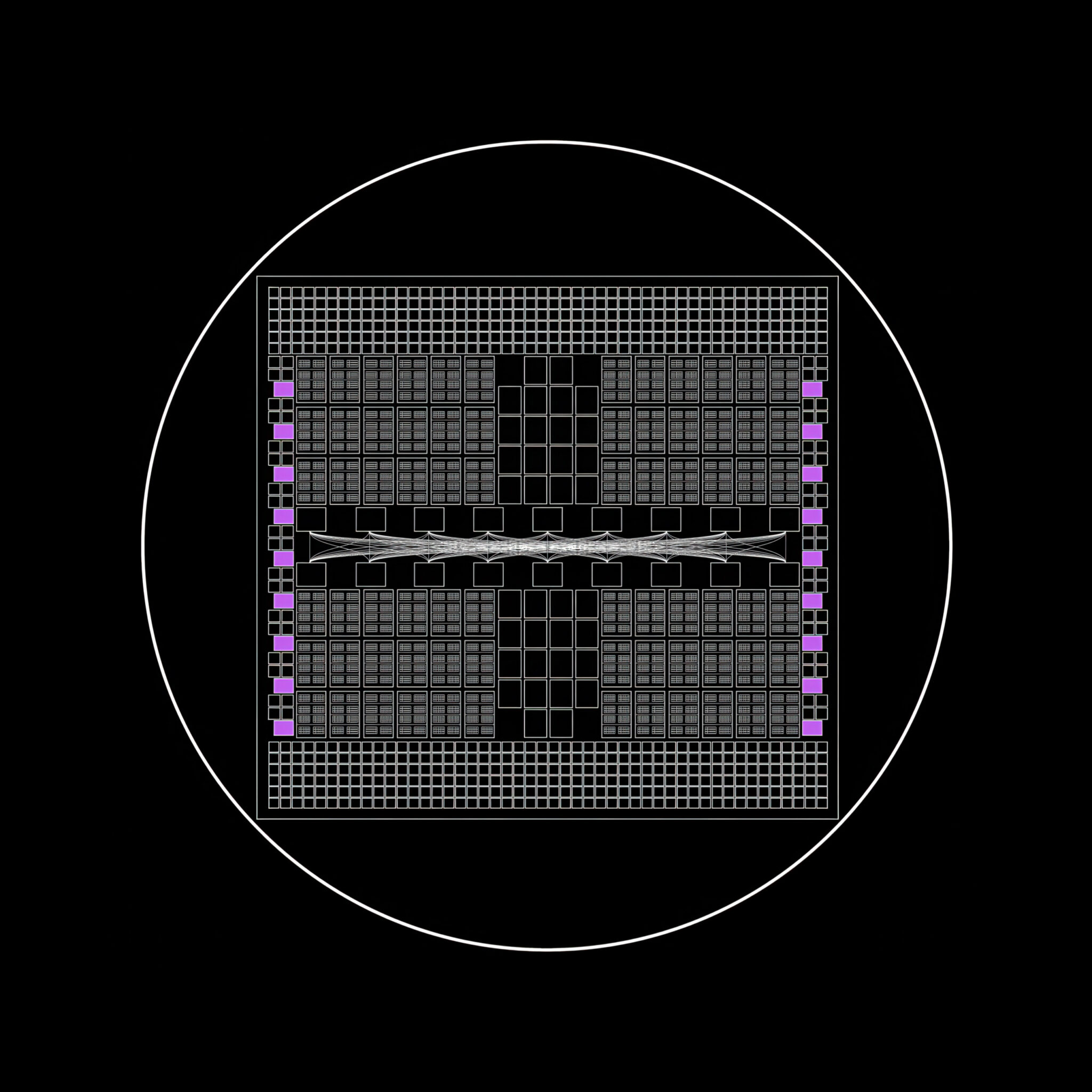

NVIDIA Spectrum-X Ethernet and Quantum-X800 InfiniBand switches make this unified effort potential on the information middle stage.

Every GPU in an NVL72 system is linked on to the manufacturing facility’s information community, and to each different GPU within the system. GB200 NVL72 techniques provide 400 Gbps of Ethernet or InfiniBand interconnect utilizing NVIDIA ConnectX-7 NICs.

NVIDIA Quantum-X800 Swap, NVLink Swap, and Spectrum-X Ethernet unify one or many NVL72 techniques to operate as one.

Opening Strains of Communication

Scaling out AI factories requires many instruments, every in service of 1 factor: unrestricted, parallel communication for each AI workload within the manufacturing facility.

NVIDIA BlueField-3 DPUs do their half to spice up AI efficiency by offloading and accelerating the non-AI duties that maintain the manufacturing facility operating: the symphony of networking, storage and safety.

NVIDIA GB200 NVL72 powers an AI manufacturing facility by CoreWeave, an NVIDIA Cloud Accomplice.

The AI Manufacturing facility Working System

The information middle is now the pc. NVIDIA Dynamo is its working system.

Dynamo orchestrates and coordinates AI inference requests throughout a big fleet of GPUs to make sure that AI factories run on the lowest potential price to maximise productiveness and income.

It could add, take away and shift GPUs throughout workloads in response to surges in buyer use, and route queries to the GPUs finest match for the job.

Colossus, xAI’s AI supercomputer. Created in 122 days, it homes over 200,000 NVIDIA GPUs — an instance of a full-stack, scale-out structure.

Blackwell is greater than a chip. It’s the engine of AI factories.

The world’s largest-planned computing clusters are being constructed on the Blackwell and Blackwell Extremely architectures — with roughly 1,000 racks of NVIDIA GB300 techniques produced every week.

Associated Information