Interest in generative AI continues to grow as new models gain more capabilities. With the latest advancements, even enthusiasts without a developer background can start using these models right away.

With popular applications like Langflow, a low-code, visual platform for designing custom AI workflows, AI enthusiasts can use simple, no-code user interfaces (UIs) to chain generative AI models. And with native integration for Ollama, users can now create local AI workflows and run them at no cost and with complete privacy, powered by NVIDIA GeForce RTX and RTX PRO GPUs.

Visual Workflows for Generative AI

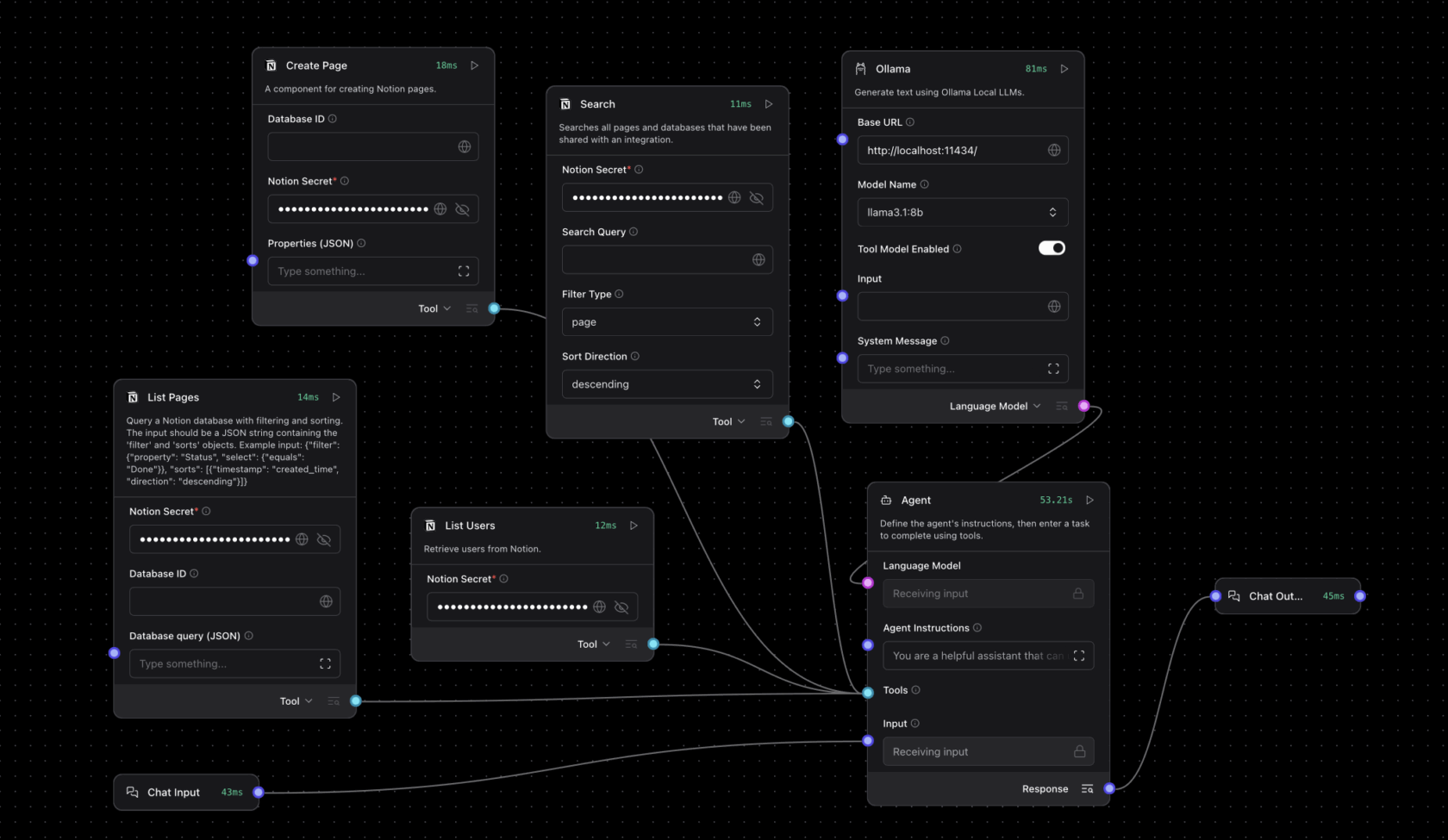

Langflow offers an easy-to-use, canvas-style interface where components of generative AI models, such as large language models (LLMs), tools, memory stores and control logic, can be connected through a simple drag-and-drop UI.

This allows complex AI workflows to be built and modified without manual scripting, easing the development of agents capable of decision-making and multistep actions. AI enthusiasts can iterate on and build these workflows without prior coding expertise.

Unlike apps limited to running a single-turn LLM query, Langflow can build advanced AI workflows that behave like intelligent collaborators, capable of analyzing files, retrieving knowledge, executing functions and responding contextually to dynamic inputs.

Langflow can run models from the cloud or locally, with full acceleration for RTX GPUs through Ollama. Running workflows locally provides several key benefits:

- Data privacy: Inputs, files and prompts remain confined to the device.

- Low costs and no API keys: Because cloud application programming interface (API) access is not required, there are no token restrictions, service subscriptions or costs associated with running the AI models.

- Performance: RTX GPUs enable low-latency, high-throughput inference, even with long context windows.

- Offline functionality: Local AI workflows are accessible without the internet.
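
To make the "no API keys" point concrete, here is a minimal sketch of what a request to a locally running Ollama server can look like. It assumes Ollama's default local address (`http://localhost:11434`) and a model tag of `llama3.1:8b` that has already been downloaded; both are assumptions for illustration, not details from this article. Inside Langflow, the Ollama component makes this kind of call on the user's behalf.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "llama3.1:8b") -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        # Only a content type is set: no API key or auth header is attached.
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3.1:8b") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With Ollama running, `generate("Explain ray tracing in one sentence.")` would return the model's reply as a string. The prompt never leaves the machine, which is the data privacy benefit listed above.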

Creating Local Agents With Langflow and Ollama

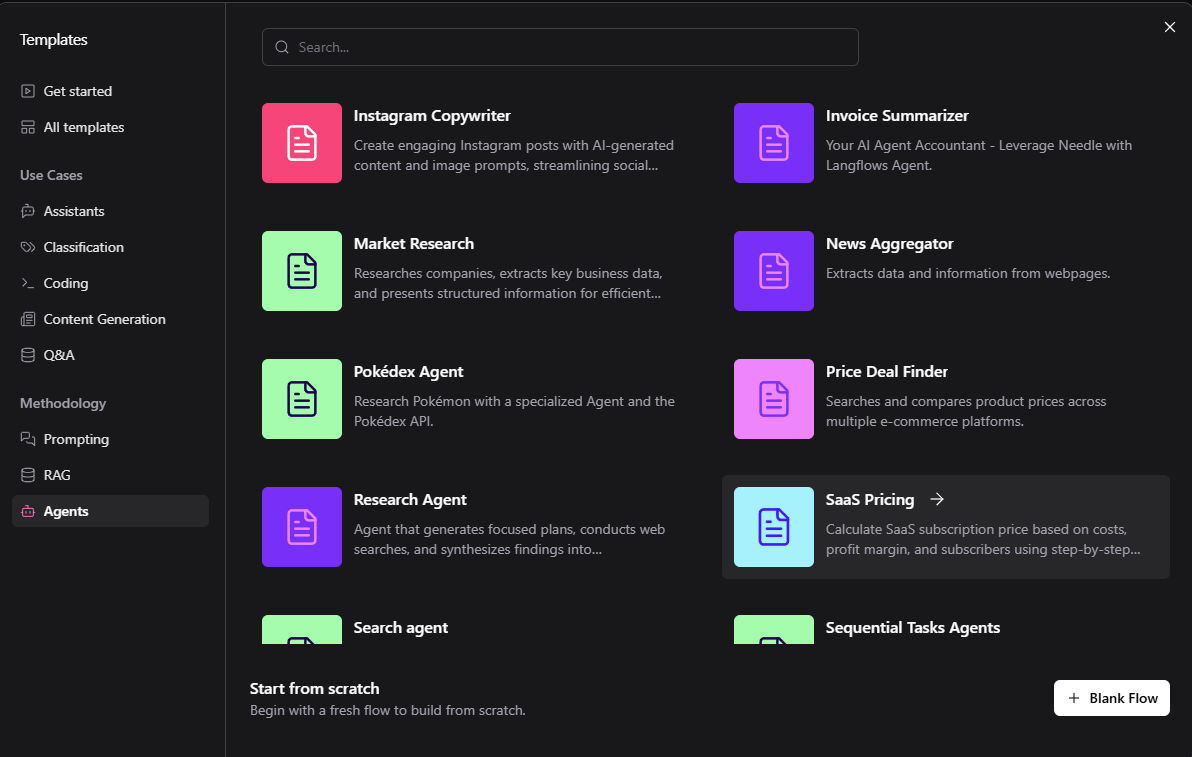

Getting started with Ollama within Langflow is simple. Built-in starters are available for use cases ranging from travel agents to purchase assistants. The default templates typically run in the cloud for testing, but they can be customized to run locally on RTX GPUs with Langflow.

To build a local workflow:

- Install the Langflow desktop app for Windows.

- Install Ollama, then run Ollama and launch the preferred model (Llama 3.1 8B or Qwen3 4B is recommended for a first workflow).

- Run Langflow and select a starter.

- Replace the cloud endpoints with the local Ollama runtime. For agentic workflows, set the language model to Custom, drag an Ollama node onto the canvas and connect the agent node’s custom model to the Language Model output of the Ollama node.
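
Before wiring the Ollama node into a starter, it can help to confirm that the chosen model is actually available locally. The sketch below queries Ollama's model-listing endpoint and checks for a given tag. The default address and the tags `llama3.1:8b` and `qwen3:4b` are assumptions about a typical setup, and the helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def fetch_tags(url: str = OLLAMA_TAGS_URL, timeout: float = 5.0) -> dict:
    """Ask the local Ollama server which models have been downloaded."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.loads(resp.read())


def model_is_available(tags: dict, model: str) -> bool:
    """Return True if `model` appears by name in an /api/tags style response."""
    return any(entry.get("name") == model for entry in tags.get("models", []))
```

For example, `model_is_available(fetch_tags(), "qwen3:4b")` returns `False` until that model has been pulled. If the server is not running at all, `fetch_tags()` raises a connection error instead.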

Templates can be modified and expanded, for example by adding system commands, local file search or structured outputs, to meet advanced automation and assistant use cases.

Watch this step-by-step walkthrough from the Langflow team:

Get Started

Below are two sample projects to start exploring.

Create a personal travel itinerary agent: Input all travel requirements, including desired restaurant reservations, travelers’ dietary restrictions and more, to automatically find and arrange accommodations, transport, food and entertainment.

Expand Notion’s capabilities: Notion, an AI workspace application for organizing projects, can be expanded with AI models that automatically input meeting notes, update the status of projects based on Slack chats or email, and send out project or meeting summaries.

RTX Remix Adds Model Context Protocol, Unlocking Agent Mods

RTX Remix, an open-source platform that allows modders to enhance materials with generative AI tools and create stunning RTX remasters that feature full ray tracing and neural rendering technologies, is adding support for Model Context Protocol (MCP) with Langflow.

Langflow nodes with MCP give users a direct interface for working with RTX Remix, enabling modders to build modding assistants capable of intelligently interacting with Remix documentation and mod functions.

To help modders get started, NVIDIA’s Langflow Remix template includes:

- A retrieval-augmented generation module with RTX Remix documentation.

- Real-time access to Remix documentation for Q&A-style support.

- An action node via MCP that supports direct function execution inside RTX Remix, including asset replacement, metadata updates and automated mod interactions.
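
For readers unfamiliar with MCP, a tool invocation is a JSON-RPC 2.0 request that uses the `tools/call` method. The sketch below builds such a message. The tool name `replace_asset` and its arguments are hypothetical placeholders, not the actual tools exposed by RTX Remix; the Remix documentation lists the real ones.

```python
import itertools
import json

# JSON-RPC requests carry an id so that responses can be matched to them.
_request_ids = itertools.count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
message = build_tool_call(
    "replace_asset",
    {"asset_path": "textures/wall_low.png", "replacement_path": "textures/wall_high.png"},
)
print(json.dumps(message, indent=2))
```

In a Langflow workflow, the MCP node constructs and sends these messages, so users do not write them by hand. The sketch only shows what travels between the agent and the tool server.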

Modding assistant agents built with this template can determine whether a query is informational or action-oriented. Based on context, agents dynamically respond with guidance or take the requested action. For example, a user might prompt the agent: “Swap this low-resolution texture with a higher-resolution version.” In response, the agent would check the asset’s metadata, locate an appropriate replacement and update the project using MCP functions, without requiring manual interaction.
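
The routing idea can be illustrated with a deliberately simplified sketch. The template itself relies on the agent's language model to make this decision; the keyword check below is a stand-in chosen only to keep the example self-contained, and every name in it is hypothetical.

```python
from typing import Callable

# Hypothetical list of verbs that signal a request to change the project.
ACTION_VERBS = {"swap", "replace", "update", "set", "apply", "remove"}


def classify_query(query: str) -> str:
    """Label a query 'action' if it opens with an action verb, else 'informational'."""
    words = query.lower().split()
    first_word = words[0].strip(".,!?:;\"'") if words else ""
    return "action" if first_word in ACTION_VERBS else "informational"


def route(
    query: str,
    answer_from_docs: Callable[[str], str],
    run_action: Callable[[str], str],
) -> str:
    """Send the query to the documentation path or to the action path."""
    handler = run_action if classify_query(query) == "action" else answer_from_docs
    return handler(query)
```

Here `answer_from_docs` stands in for the retrieval-augmented generation module and `run_action` for the MCP action path. A real agent would replace `classify_query` with a model call, since keyword matching misses phrasings such as "Could you swap this texture?".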

Documentation and setup instructions for the Remix template are available in the RTX Remix developer guide.

Control RTX AI PCs With Project G-Assist in Langflow

NVIDIA Project G-Assist is an experimental, on-device AI assistant that runs locally on GeForce RTX PCs. It enables users to query system information (e.g. PC specs, CPU/GPU temperatures, utilization), adjust system settings and more, all through simple natural language prompts.

With the G-Assist component in Langflow, these capabilities can be built into custom agentic workflows. Users can prompt G-Assist to “get GPU temperatures” or “tune fan speeds,” and its response and actions will flow through their chain of components.

Beyond diagnostics and system control, G-Assist is extensible via its plug-in architecture, which allows users to add new commands tailored to their workflows. Community-built plug-ins can also be invoked directly from Langflow workflows.

To get started with the G-Assist component in Langflow, read the developer documentation.

Langflow is also a development tool for NVIDIA NeMo microservices, a modular platform for building and deploying AI workflows across on-premises or cloud Kubernetes environments.

With integrated support for Ollama and MCP, Langflow offers a practical no-code platform for building real-time AI workflows and agents that run fully offline and on device, all accelerated by NVIDIA GeForce RTX and RTX PRO GPUs.

Each week, the RTX AI Garage blog series features community-driven AI innovations and content for those looking to learn more about NVIDIA NIM microservices and AI Blueprints, as well as building AI agents, creative workflows, productivity apps and more on AI PCs and workstations.
