Quantum computing promises to reshape industries, but progress hinges on solving key problems. Error correction. Simulations of qubit designs. Circuit compilation optimization tasks. These are among the bottlenecks that must be overcome to bring quantum hardware into the era of useful applications.

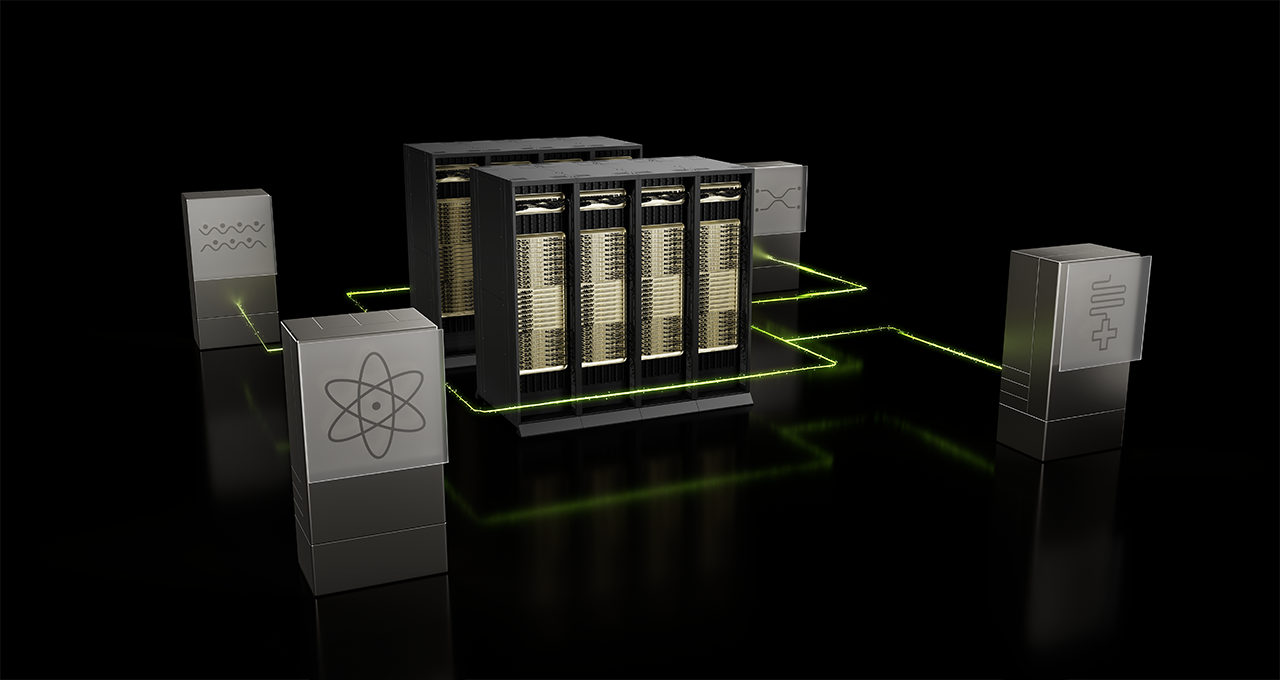

Enter accelerated computing. The parallel processing of accelerated computing provides the power needed to make the quantum computing breakthroughs of today and tomorrow possible.

NVIDIA CUDA-X libraries form the backbone of quantum research. From faster decoding of quantum errors to designing larger systems of qubits, researchers are using GPU-accelerated tools to expand classical computation and bring useful quantum applications closer to reality.

Accelerating Quantum Error Correction Decoders With NVIDIA CUDA-Q QEC and cuDNN

Quantum error correction (QEC) is a key technique for dealing with unavoidable noise in quantum processors. It’s how researchers distill thousands of noisy physical qubits into a handful of noiseless, logical ones by decoding data in real time, spotting and correcting errors as they emerge.

Among the most promising approaches to QEC are quantum low-density parity-check (qLDPC) codes, which can mitigate errors with low qubit overhead. But decoding them requires computationally expensive conventional algorithms running at extremely low latency with very high throughput.
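To make the decoding task concrete, here is a minimal Python sketch of syndrome decoding on a tiny classical parity-check code (the 3-bit repetition code). It is an illustration only: the matrix `H` and the helper names `syndrome` and `decode_min_weight` are assumptions made for this example, not part of any NVIDIA library, and real qLDPC decoders work on far larger sparse matrices with approximate algorithms rather than brute force.

```python
from itertools import combinations

# Parity-check matrix of the 3-bit repetition code (illustrative assumption).
# Each row is one parity check over the bits marked with 1.
H = [
    [1, 1, 0],
    [0, 1, 1],
]

def syndrome(H, error):
    """Return the parity (mod 2) of each check for a given error pattern."""
    return tuple(sum(h * e for h, e in zip(row, error)) % 2 for row in H)

def decode_min_weight(H, observed):
    """Brute-force search for the lowest-weight error matching the syndrome."""
    n = len(H[0])
    for weight in range(n + 1):
        for flipped in combinations(range(n), weight):
            candidate = [1 if i in flipped else 0 for i in range(n)]
            if syndrome(H, candidate) == tuple(observed):
                return candidate
    return None  # no error pattern reproduces the syndrome

error = [0, 0, 1]                     # a single bit flip on the last bit
s = syndrome(H, error)                # which checks fire
correction = decode_min_weight(H, s)  # the decoder's best guess at the error
```

The brute-force search grows exponentially with the number of bits, which hints at why decoding large codes within tight latency budgets calls for smarter algorithms and parallel hardware.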

The Quantum Software Lab, hosted at the School of Informatics at the University of Edinburgh, used the NVIDIA CUDA-Q QEC library to build a new qLDPC decoding method called AutoDEC, and saw a 2x boost in speed and accuracy. It was developed using CUDA-Q’s GPU-accelerated BP-OSD decoding functionality, which parallelizes the decoding process, increasing the odds that error correction works.

In a separate collaboration with QuEra, the NVIDIA PhysicsNeMo framework and cuDNN library were used to develop an AI decoder with a transformer architecture. AI methods offer a promising means to scale decoding to the larger-distance codes needed in future quantum computers. These codes improve error correction, but they come with a steep computational cost.

AI models can frontload the computationally intensive portions of the workloads by training ahead of time and running more efficient inference at runtime. Using an AI model developed with NVIDIA CUDA-Q, QuEra achieved a 50x boost in decoding speed, along with improved accuracy.

Optimizing Quantum Circuit Compilation With cuDF

One way to improve an algorithm that works even without QEC is to compile it to the highest-quality qubits on a processor. The process of mapping qubits in an abstract quantum circuit to a physical layout of qubits on a chip is tied to an extremely computationally challenging problem known as graph isomorphism.
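As a rough illustration of the layout problem, the Python sketch below brute-forces the placement of a three-qubit circuit onto a hypothetical five-qubit chip and keeps the placement with the lowest total error. The coupling map, the error rates and the function name `best_layout` are all invented for this example; practical compilers use far more scalable search strategies.

```python
from itertools import permutations

# Two-qubit interactions required by an abstract 3-qubit circuit (a line: 0-1-2).
circuit_edges = [(0, 1), (1, 2)]

# Hypothetical chip: physical couplings with made-up two-qubit error rates.
chip_error = {
    (0, 1): 0.020,
    (1, 2): 0.008,
    (2, 3): 0.005,
    (3, 4): 0.015,
}

def edge_error(a, b):
    """Error rate of the physical coupling a-b, or None if not connected."""
    return chip_error.get((a, b), chip_error.get((b, a)))

def best_layout(circuit_edges, n_logical, n_physical):
    """Try every logical-to-physical assignment; keep the lowest total error."""
    best, best_cost = None, float("inf")
    for layout in permutations(range(n_physical), n_logical):
        cost = 0.0
        for u, v in circuit_edges:
            err = edge_error(layout[u], layout[v])
            if err is None:        # required coupling is missing on the chip
                break
            cost += err
        else:                      # every circuit edge landed on a real coupling
            if cost < best_cost:
                best, best_cost = layout, cost
    return best, best_cost

layout, cost = best_layout(circuit_edges, n_logical=3, n_physical=5)
```

Here `layout[i]` is the physical qubit assigned to logical qubit `i`. The number of candidate assignments grows factorially with chip size, so exhaustive search like this is only viable for toy cases.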

In collaboration with Q-CTRL and Oxford Quantum Circuits, NVIDIA developed a GPU-accelerated layout selection method called ∆-Motif, providing up to a 600x speedup in applications like quantum compilation, which involve graph isomorphism. To scale this approach, NVIDIA and collaborators used cuDF, a GPU-accelerated data science library, to perform graph operations and construct potential layouts with predefined patterns (aka “motifs”) based on the physical quantum chip layout.

These layouts can be constructed efficiently and in parallel by merging motifs, enabling GPU acceleration in graph isomorphism problems for the first time.
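One way to picture motif merging is as a table join: each row of a table holds a partial layout, and joining it against a table of chip couplings extends every row by one qubit. The plain-Python sketch below does this for line-shaped circuits on a hypothetical chip. It is a loose illustration under stated assumptions, not the published ∆-Motif algorithm and not cuDF code; the coupling list and the names `extend_by_edge` and `path_layouts` are invented here.

```python
# Hypothetical chip couplings (undirected), listed once per pair.
couplings = [(0, 1), (1, 2), (2, 3), (3, 4), (1, 4)]

# "Edge motif" table: every coupling in both directions, one row per match.
edge_rows = [(a, b) for a, b in couplings] + [(b, a) for a, b in couplings]

def extend_by_edge(partial_rows, edge_rows):
    """Join partial layouts with the edge table on the last qubit of each row."""
    by_start = {}
    for a, b in edge_rows:
        by_start.setdefault(a, []).append(b)
    merged = []
    for row in partial_rows:
        for nxt in by_start.get(row[-1], []):
            if nxt not in row:     # a physical qubit can be used only once
                merged.append(row + (nxt,))
    return merged

def path_layouts(n_qubits):
    """Build all placements of an n-qubit line circuit by repeated merging."""
    rows = edge_rows
    for _ in range(n_qubits - 2):
        rows = extend_by_edge(rows, edge_rows)
    return rows

three_qubit_layouts = path_layouts(3)
```

Each row is extended independently of the others, which is the property that makes this style of construction a natural fit for data-parallel hardware.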

Accelerating High-Fidelity Quantum System Simulation With cuQuantum

Numerical simulation of quantum systems is critical for understanding the physics of quantum devices, and for developing better qubit designs. QuTiP, a widely used open-source toolkit, is a workhorse for understanding the noise sources present in quantum hardware.

A key use case is the high-fidelity simulation of open quantum systems, such as modeling superconducting qubits coupled with other components within the quantum processor, like resonators and filters, to accurately predict device behavior.
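For intuition, simulating an open quantum system usually means integrating a master equation for the system's density matrix. The plain-Python sketch below does this for the smallest possible case, a single qubit losing energy to its environment, using a Lindblad master equation and a fixed-step Runge-Kutta integrator. It is a toy under stated assumptions: the parameter values are arbitrary, the helper names are invented for this example, and it uses neither QuTiP nor cuQuantum.

```python
import math

def matmul(a, b):
    """Multiply two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    """Conjugate transpose of a 2x2 matrix."""
    return [[a[j][i].conjugate() for j in range(2)] for i in range(2)]

def combine(*terms):
    """Return the sum of coeff * matrix over (coeff, matrix) pairs."""
    return [[sum(c * m[i][j] for c, m in terms) for j in range(2)]
            for i in range(2)]

def lindblad_rhs(rho, H, L, gamma):
    """Right-hand side of d(rho)/dt = -i[H, rho] + gamma * D[L](rho)."""
    Ld = dagger(L)
    LdL = matmul(Ld, L)
    return combine(
        (-1j, matmul(H, rho)), (1j, matmul(rho, H)),
        (gamma, matmul(matmul(L, rho), Ld)),
        (-0.5 * gamma, matmul(LdL, rho)),
        (-0.5 * gamma, matmul(rho, LdL)),
    )

def evolve(rho, H, L, gamma, t_final, steps):
    """Integrate the master equation with fixed-step fourth-order Runge-Kutta."""
    dt = t_final / steps
    for _ in range(steps):
        k1 = lindblad_rhs(rho, H, L, gamma)
        k2 = lindblad_rhs(combine((1, rho), (dt / 2, k1)), H, L, gamma)
        k3 = lindblad_rhs(combine((1, rho), (dt / 2, k2)), H, L, gamma)
        k4 = lindblad_rhs(combine((1, rho), (dt, k3)), H, L, gamma)
        rho = combine((1, rho), (dt / 6, k1), (dt / 3, k2),
                      (dt / 3, k3), (dt / 6, k4))
    return rho

omega, gamma = 1.0, 0.2                  # illustrative values, arbitrary units
H = [[-omega / 2, 0], [0, omega / 2]]    # qubit Hamiltonian, basis (ground, excited)
L = [[0, 1], [0, 0]]                     # lowering operator: excited -> ground
rho0 = [[0, 0], [0, 1]]                  # start in the excited state

rho_t = evolve(rho0, H, L, gamma, t_final=5.0, steps=500)
excited_population = rho_t[1][1].real
expected = math.exp(-gamma * 5.0)        # analytic decay for this simple model
```

For this model the excited-state population should track the analytic exponential decay closely. A transmon coupled to a resonator needs a much larger state space, and the density matrix grows with the square of that dimension, which is where the cost of high-fidelity simulation comes from.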

Through a collaboration with the University of Sherbrooke and Amazon Web Services (AWS), QuTiP was integrated with the NVIDIA cuQuantum software development kit via a new QuTiP plug-in called qutip-cuquantum. AWS provided the GPU-accelerated Amazon Elastic Compute Cloud (Amazon EC2) compute infrastructure for the simulation. For large systems, researchers saw up to a 4,000x performance boost when studying a transmon qubit coupled with a resonator.

Learn more about the NVIDIA CUDA-Q platform. Read this NVIDIA technical blog for more details on how CUDA-Q powers quantum applications research.

Explore quantum computing sessions at NVIDIA GTC Washington, D.C., running Oct. 27-29.