Generative AI is transforming PC software into breakthrough experiences, from digital humans to writing assistants, intelligent agents and creative tools.

NVIDIA RTX AI PCs are powering this transformation with technology that makes it simpler to get started experimenting with generative AI and unlock greater performance on Windows 11.

NVIDIA TensorRT has been reimagined for RTX AI PCs, combining industry-leading TensorRT performance with just-in-time, on-device engine building and an 8x smaller package size for seamless AI deployment to more than 100 million RTX AI PCs.

Announced at Microsoft Build, TensorRT for RTX is natively supported by Windows ML, a new inference stack that provides app developers with both broad hardware compatibility and state-of-the-art performance.

For developers looking for AI features ready to integrate, NVIDIA software development kits (SDKs) offer a wide array of options, from NVIDIA DLSS to multimedia enhancements like NVIDIA RTX Video. This month, top software applications from Autodesk, Bilibili, Chaos, LM Studio and Topaz Labs are releasing updates to unlock RTX AI features and acceleration.

AI enthusiasts and developers can easily get started with AI using NVIDIA NIM: prepackaged, optimized AI models that can run in popular apps like AnythingLLM, Microsoft VS Code and ComfyUI. Releasing this week, the FLUX.1-schnell image generation model will be available as a NIM microservice, and the popular FLUX.1-dev NIM microservice has been updated to support more RTX GPUs.

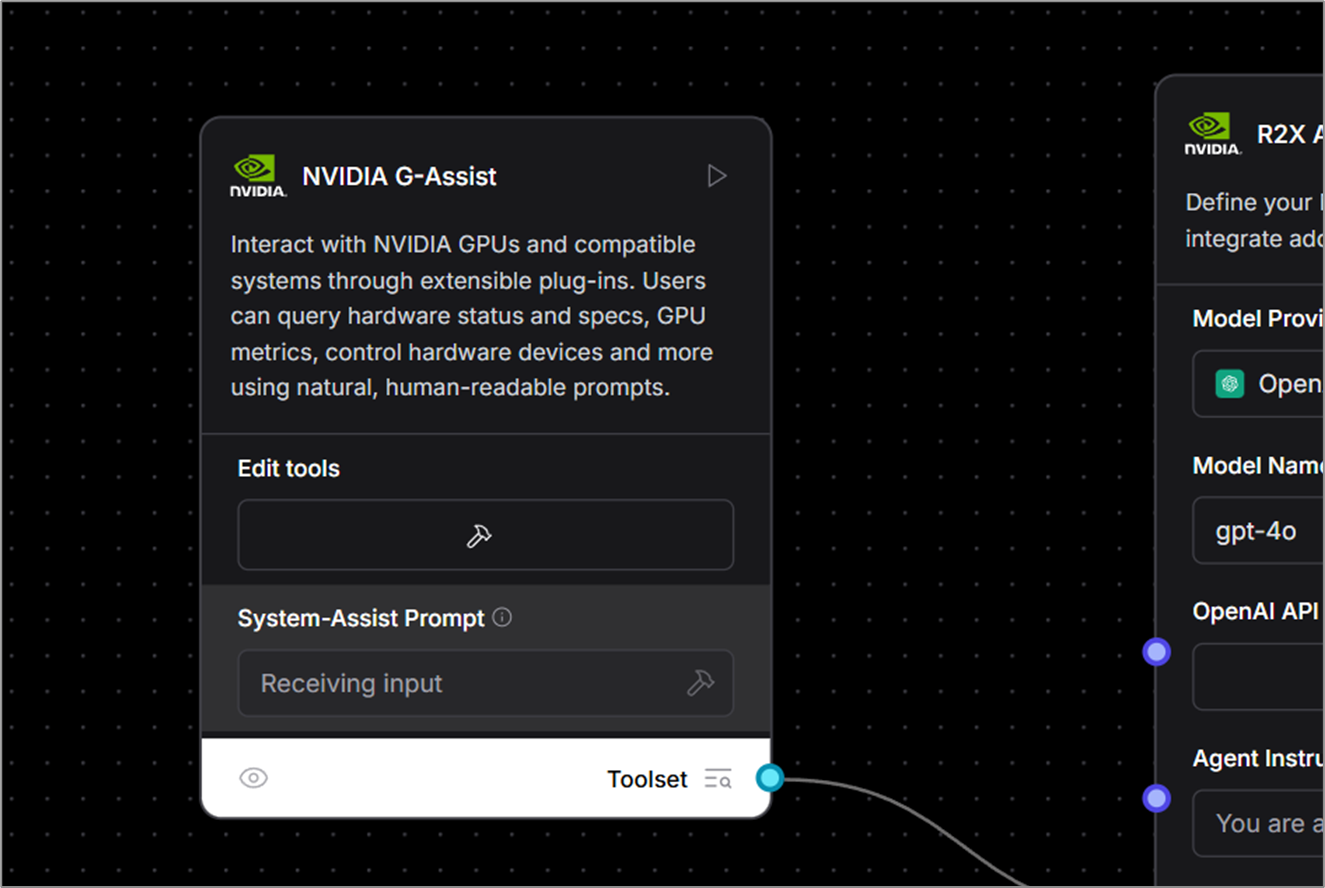

Those looking for a simple, no-code way to dive into AI development can tap into Project G-Assist, the RTX PC AI assistant in the NVIDIA app, to build plug-ins to control PC apps and peripherals using natural language AI. New community plug-ins are now available, including Google Gemini web search, Spotify, Twitch, IFTTT and SignalRGB.

Accelerated AI Inference With TensorRT for RTX

Today's AI PC software stack requires developers to compromise on performance or invest in custom optimizations for specific hardware.

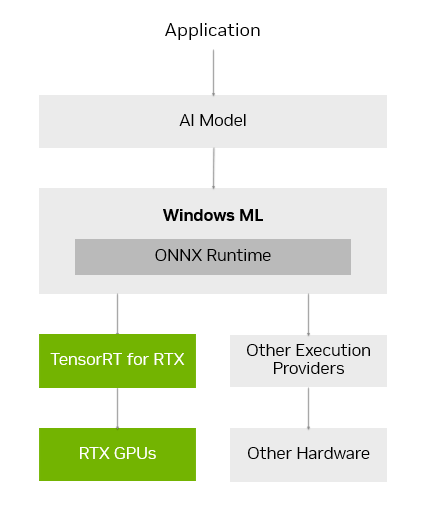

Windows ML was built to solve these challenges. Windows ML is powered by ONNX Runtime and seamlessly connects to an optimized AI execution layer provided and maintained by each hardware manufacturer.

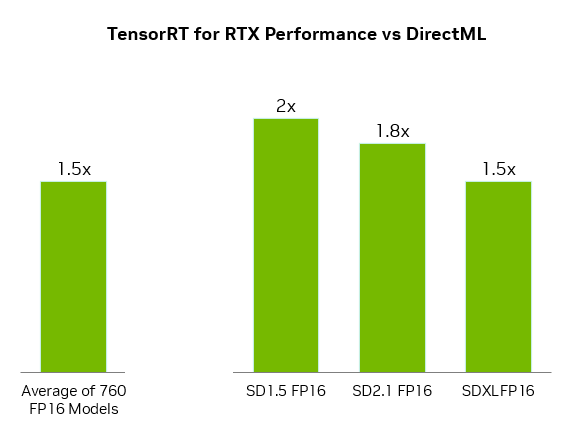

For GeForce RTX GPUs, Windows ML automatically uses the TensorRT for RTX inference library for high performance and rapid deployment. Compared with DirectML, TensorRT delivers over 50% faster performance for AI workloads on PCs.

Windows ML also delivers quality-of-life benefits for developers. It can automatically select the right hardware (GPU, CPU or NPU) to run each AI feature, and download the execution provider for that hardware, removing the need to package those files into the app. This allows the latest TensorRT performance optimizations to be delivered to users as soon as they're ready.
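The selection-and-download behavior described above can be pictured with a small sketch. This is not the Windows ML or ONNX Runtime API: the provider names, the preference order and the `download` callback are all hypothetical, chosen only to illustrate "pick the best available backend, then fetch it on demand instead of bundling it."

```python
# Hypothetical provider names and ordering; not real Windows ML identifiers.
PREFERENCE = ("tensorrt_rtx", "npu", "directml", "cpu")


def select_provider(available, preference=PREFERENCE):
    """Return the first preferred provider that the machine reports as available."""
    for name in preference:
        if name in available:
            return name
    raise RuntimeError("no usable execution provider found")


def ensure_provider(name, installed, download):
    """Fetch a provider the first time it is needed, then reuse the local copy.

    `installed` is a dict acting as the local store; `download` is a
    caller-supplied function (an assumption of this sketch).
    """
    if name not in installed:
        installed[name] = download(name)
    return installed[name]


if __name__ == "__main__":
    installed = {}
    chosen = select_provider({"cpu", "directml", "tensorrt_rtx"})
    ensure_provider(chosen, installed, download=lambda n: f"<binary for {n}>")
    print(chosen, installed)
```

Because the provider is resolved at run time, the app itself ships without any hardware-specific binaries, which is the packaging benefit the paragraph above describes.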

TensorRT, a library originally built for data centers, has been redesigned for RTX AI PCs. Instead of pre-generating TensorRT engines and packaging them with the app, TensorRT for RTX uses just-in-time, on-device engine building to optimize how the AI model is run for the user's specific RTX GPU in mere seconds. And the library's packaging has been streamlined, reducing its file size by 8x.
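As a rough illustration of the just-in-time idea, the sketch below builds an "engine" the first time a given model is requested on a given GPU and reuses it afterward. It does not call TensorRT: `build_fn` stands in for the real builder, and keeping built engines in a cache keyed by model and GPU is an assumption of this sketch, not something the article states.

```python
import hashlib


class EngineCache:
    """Build an engine on first use for a (model, GPU) pair, then reuse it."""

    def __init__(self, build_fn):
        self._build_fn = build_fn   # hypothetical stand-in for a real engine builder
        self._engines = {}
        self.builds = 0             # counts how many times a build actually ran

    def get(self, model_bytes, gpu_name):
        # Key on both the model contents and the GPU, since an engine tuned
        # for one GPU is not assumed to be valid for another.
        key = hashlib.sha256(model_bytes + b"\0" + gpu_name.encode("utf-8")).hexdigest()
        if key not in self._engines:
            self._engines[key] = self._build_fn(model_bytes, gpu_name)
            self.builds += 1
        return self._engines[key]


if __name__ == "__main__":
    cache = EngineCache(lambda model, gpu: f"engine[{len(model)} bytes, {gpu}]")
    cache.get(b"model-weights", "example-gpu-a")
    cache.get(b"model-weights", "example-gpu-a")   # reused, no rebuild
    cache.get(b"model-weights", "example-gpu-b")   # different GPU, new build
    print(cache.builds)
```

The app ships only the model; the per-GPU artifact is produced on the user's machine, which is why nothing hardware-specific needs to be pre-generated.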

TensorRT for RTX is available to developers through the Windows ML preview today, and will be available as a standalone SDK at NVIDIA Developer in June.

Developers can learn more in the TensorRT for RTX launch blog or Microsoft's Windows ML blog.

Expanding the AI Ecosystem on Windows 11 PCs

Developers looking to add AI features or boost app performance can tap into a broad range of NVIDIA SDKs. These include NVIDIA CUDA and TensorRT for GPU acceleration; NVIDIA DLSS and OptiX for 3D graphics; NVIDIA RTX Video and Maxine for multimedia; and NVIDIA Riva and ACE for generative AI.

Top applications are releasing updates this month to enable unique features using these NVIDIA SDKs, including:

- LM Studio, which released an update to its app to upgrade to the latest CUDA version, increasing performance by over 30%.

- Topaz Labs, which is releasing a generative AI video model to enhance video quality, accelerated by CUDA.

- Chaos Enscape and Autodesk VRED, which are adding DLSS 4 for faster performance and better image quality.

- Bilibili, which is integrating NVIDIA Broadcast features such as Virtual Background to enhance the quality of livestreams.

NVIDIA looks forward to continuing to work with Microsoft and top AI app developers to help them accelerate their AI features on RTX-powered machines through the Windows ML and TensorRT integration.

Local AI Made Easy With NIM Microservices and AI Blueprints

Getting started with developing AI on PCs can be daunting. AI developers and enthusiasts have to select from over 1.2 million AI models on Hugging Face, quantize the chosen model into a format that runs well on PC, find and install all the dependencies to run it, and more.

NVIDIA NIM makes it easy to get started by providing a curated list of AI models, prepackaged with all the files needed to run them and optimized to achieve full performance on RTX GPUs. And because they're containerized, the same NIM microservice can be run seamlessly across PCs or the cloud.

NVIDIA NIM microservices are available to download through build.nvidia.com or through top AI apps like AnythingLLM, ComfyUI and AI Toolkit for Visual Studio Code.
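One practical consequence of running the same microservice locally or in the cloud is that client code only needs a different base URL. The sketch below builds, but does not send, an HTTP request for a chat-style model. It assumes the microservice exposes an OpenAI-style `/v1/chat/completions` route; the host, port and model name are placeholders, and image models such as FLUX.1 may use a different route and payload.

```python
import json
from urllib.request import Request


def build_chat_request(base_url, model, prompt):
    """Build (without sending) a POST request for an OpenAI-style chat route.

    The route and payload shape are assumptions of this sketch; check the
    documentation of the specific microservice before relying on them.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder endpoints: only the base URL differs between the two targets.
    for base in ("http://localhost:8000", "https://cloud.example.invalid"):
        req = build_chat_request(base, "example-model", "Hello")
        print(req.get_method(), req.full_url)
```

Passing the returned object to `urllib.request.urlopen` would send it; that step is left out so the sketch runs without a live service.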

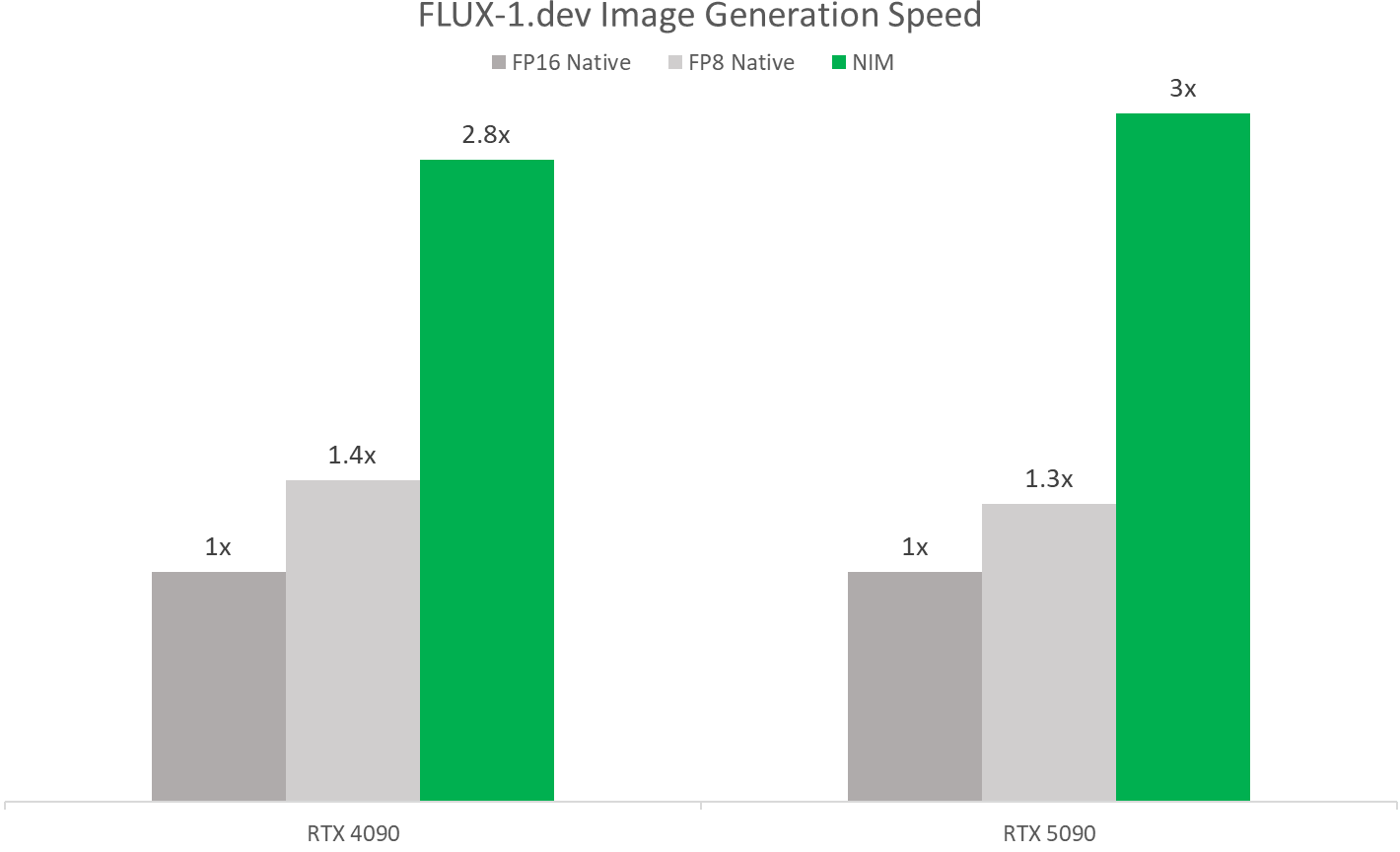

During COMPUTEX, NVIDIA will release the FLUX.1-schnell NIM microservice, an image generation model from Black Forest Labs for fast image generation, and update the FLUX.1-dev NIM microservice to add compatibility for a wide range of GeForce RTX 50 and 40 Series GPUs.

These NIM microservices enable faster performance with TensorRT and quantized models. On NVIDIA Blackwell GPUs, they run over twice as fast as running them natively, thanks to FP4 and RTX optimizations.
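To give a feel for what FP4 means numerically, the sketch below quantizes a block of values to the eight magnitudes representable in the common 4-bit E2M1 floating-point layout, using one shared scale per block. The article does not describe how the NIM microservices quantize their models, so the per-block max scaling and nearest-value rounding here are illustrative assumptions.

```python
# Magnitudes representable by a 4-bit E2M1 float (1 sign, 2 exponent, 1 mantissa bit).
FP4_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block(values):
    """Return (scale, codes) where each code is a signed FP4-representable value."""
    max_abs = max((abs(v) for v in values), default=0.0)
    if max_abs == 0.0:
        return 1.0, [0.0] * len(values)
    # Map the largest magnitude in the block onto the largest FP4 value.
    scale = max_abs / FP4_MAGNITUDES[-1]
    codes = []
    for v in values:
        target = abs(v) / scale
        magnitude = min(FP4_MAGNITUDES, key=lambda m: abs(target - m))
        codes.append(-magnitude if v < 0 else magnitude)
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate values from the shared scale and FP4 codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    scale, codes = quantize_block([6.0, -3.0, 0.4, 0.0])
    print(scale, codes, dequantize_block(scale, codes))
```

Each value then needs only 4 bits plus a share of the block's scale, which reduces memory traffic at the cost of the rounding error visible when `0.4` becomes `0.5`.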

AI developers can also jumpstart their work with NVIDIA AI Blueprints: sample workflows and projects using NIM microservices.

NVIDIA last month released the NVIDIA AI Blueprint for 3D-guided generative AI, a powerful way to control composition and camera angles of generated images by using a 3D scene as a reference. Developers can modify the open-source blueprint for their needs or extend it with additional functionality.

New Project G-Assist Plug-Ins and Sample Projects Now Available

NVIDIA recently released Project G-Assist as an experimental AI assistant integrated into the NVIDIA app. G-Assist enables users to control their GeForce RTX system using simple voice and text commands, offering a more convenient interface compared to manual controls spread across numerous legacy control panels.

Developers can also use Project G-Assist to easily build plug-ins, test assistant use cases and publish them through NVIDIA's Discord and GitHub.

The Project G-Assist Plug-in Builder, a ChatGPT-based app that enables no-code or low-code development with natural language commands, makes it easy to start creating plug-ins. These lightweight, community-driven add-ons use straightforward JSON definitions and Python logic.
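The pairing of a JSON definition with Python logic can be sketched as follows. This is not the actual G-Assist plug-in schema or protocol: the manifest fields, the `set_brightness` function and the call format are all hypothetical, meant only to show how a declarative description and a small handler table fit together.

```python
import json

# Hypothetical manifest; the real G-Assist schema may differ.
MANIFEST_JSON = """
{
  "name": "hello_lights",
  "functions": [
    {
      "name": "set_brightness",
      "description": "Set light brightness as a percentage.",
      "parameters": {"level": "integer"}
    }
  ]
}
"""


def set_brightness(level):
    """Example handler: clamp the requested level to 0..100 and report it."""
    level = max(0, min(100, int(level)))
    return f"brightness set to {level}"


HANDLERS = {"set_brightness": set_brightness}


def dispatch(manifest, call):
    """Route a function call to its handler if the manifest declares it."""
    declared = {f["name"]: f for f in manifest["functions"]}
    name = call.get("function")
    if name not in declared or name not in HANDLERS:
        return {"success": False, "message": f"unknown function: {name}"}
    params = call.get("params", {})
    unexpected = sorted(set(params) - set(declared[name]["parameters"]))
    if unexpected:
        return {"success": False, "message": f"unexpected parameters: {unexpected}"}
    return {"success": True, "message": HANDLERS[name](**params)}


if __name__ == "__main__":
    manifest = json.loads(MANIFEST_JSON)
    print(dispatch(manifest, {"function": "set_brightness", "params": {"level": 150}}))
```

Keeping the declaration in JSON lets an assistant discover what a plug-in can do without executing any of its code, while the Python side stays a plain mapping from names to functions.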

New open-source plug-in samples are available now on GitHub, showcasing diverse ways on-device AI can enhance PC and gaming workflows. They include:

- Gemini: The existing Gemini plug-in that uses Google's cloud-based free-to-use large language model has been updated to include real-time web search capabilities.

- IFTTT: A plug-in that lets users create automations across hundreds of compatible endpoints to trigger IoT routines, such as adjusting room lights or smart shades, or pushing the latest gaming news to a mobile device.

- Discord: A plug-in that enables users to easily share game highlights or messages directly to Discord servers without disrupting gameplay.

Explore the GitHub repository for more examples, including hands-free music control via Spotify, livestream status checks with Twitch, and more.

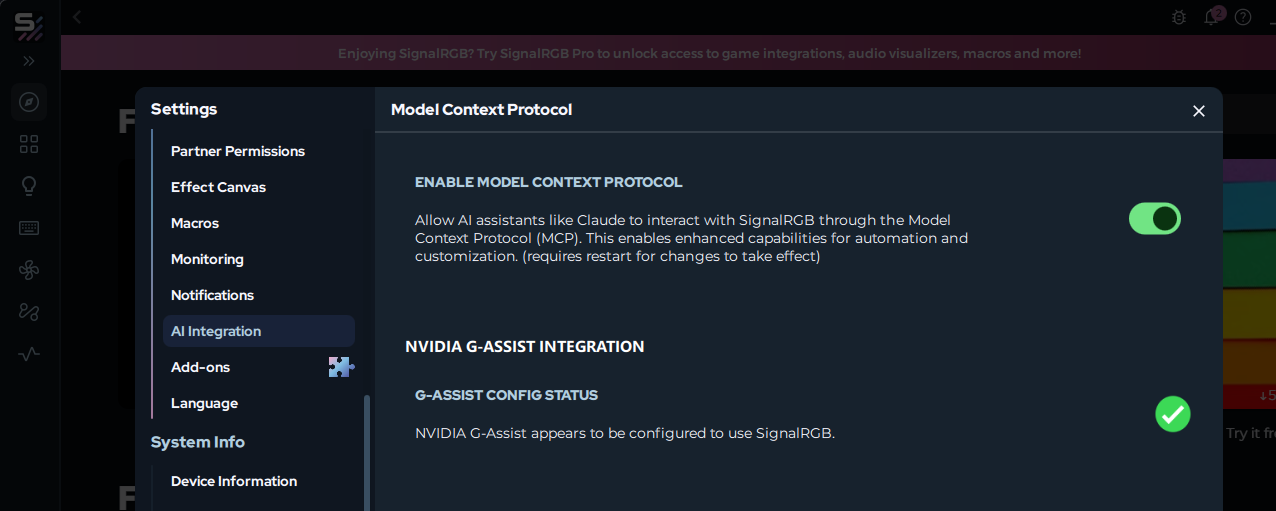

Companies are adopting AI as the new PC interface. For example, SignalRGB is developing a G-Assist plug-in that enables unified lighting control across multiple manufacturers. Users will soon be able to install this plug-in directly from the SignalRGB app.

Starting this week, the AI community will also be able to use G-Assist as a custom component in Langflow, enabling users to integrate function-calling capabilities in low-code or no-code workflows, AI applications and agentic flows.

Enthusiasts interested in developing and experimenting with Project G-Assist plug-ins are invited to join the NVIDIA Developer Discord channel to collaborate, share creations and gain support.

Each week, the RTX AI Garage blog series features community-driven AI innovations and content for those looking to learn more about NIM microservices and AI Blueprints, as well as building AI agents, creative workflows, digital humans, productivity apps and more on AI PCs and workstations.

Plug in to NVIDIA AI PC on Facebook, Instagram, TikTok and X, and stay informed by subscribing to the RTX AI PC newsletter.

Follow NVIDIA Workstation on LinkedIn and X.

See notice regarding software product information.